A few weeks ago Qualcomm announced their dedicated inference chip called the Cloud AI 100. SemiAccurate thinks this chip is going to be a big deal for several reasons above and beyond the tech inside.

Let’s start out by saying what this chip is: a dedicated server inference accelerator, not a training chip. It does the same job your cell phone does when the camera puts a box around a person for face detection, or when it handles the invisible mode setting as you take a pic. The Cloud AI 100 (hereafter 100) just does it much faster and presumably more efficiently.

Inference vs phones

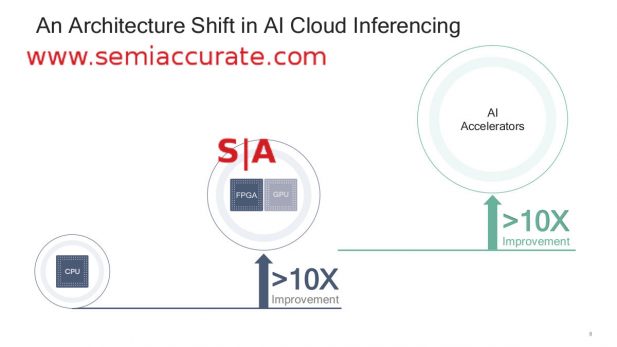

CPU power needed for inference is relatively small per task. If your cell phone can do it on every frame of a 4K video in realtime, as some can now, you don’t need a fire-breathing 300W card to do it in the cloud. That said, your phone can’t do everything you may want on a 4K video in realtime, but we are getting closer every generation. Those fire-breathing 300W cards in servers can do everything at far faster than realtime rates or, more likely, do a good enough job for hundreds of users at the same time.

CPU, GPU, or inference device?

Workloads for inference are nearly endless. Facebook, for example, uses it to identify users in uploaded pictures. And to target ads to you. And probably more nefarious things. Your phone does face detection but not subject recognition, yet. More importantly, it uses AI to tweak settings in ways that are totally transparent to you. For jobs that require far more horsepower than an edge device can provide, the phone or IoT box recognizes an area of interest, then sends that area to the cloud for more processing. This saves power and bandwidth; it is a classic data vs compute locality issue.
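To put a rough number on the bandwidth side of that tradeoff, here is a minimal sketch. It assumes uncompressed 8-bit RGB data and a hypothetical 256x256 area of interest; neither figure comes from Qualcomm, and real pipelines compress both the frame and the crop, so treat the ratio as illustrative only.

```python
def raw_bytes(width: int, height: int, channels: int = 3) -> int:
    """Size in bytes of an uncompressed 8-bit image with the given dimensions."""
    return width * height * channels


# A full 4K UHD frame versus a hypothetical 256x256 area of interest.
# Both sizes are assumptions made for illustration.
full_frame = raw_bytes(3840, 2160)
roi_crop = raw_bytes(256, 256)

# How many times less data the edge device uploads if it sends only the crop.
saving = full_frame / roi_crop

print(f"full frame: {full_frame} bytes, crop: {roi_crop} bytes")
print(f"sending only the crop moves about {saving:.0f}x less data")
```

The edge device spends a little compute finding the area of interest so the cloud only has to receive and chew on the part that matters.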

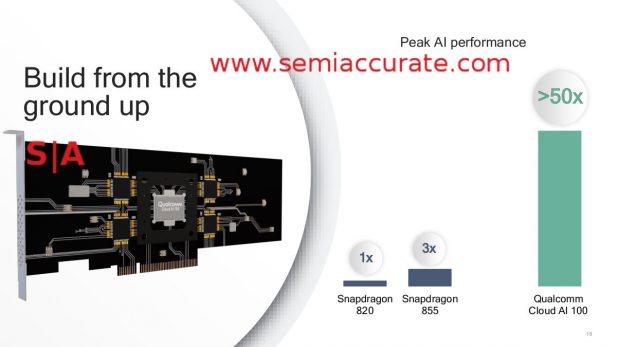

So what is Qualcomm announcing? A 7nm part that has >50x the AI performance of a Snapdragon 820. Details? Not yet, other than that it ships by the end of the year. Stay tuned, they won’t be silent for long. Who wants the 100? Facebook and Microsoft gave some pretty glowing speeches about the part during AI day, so those seem like good candidates, and if it is as good as Qualcomm claims, they won’t be the only two.

Author’s note: This announcement made me very angry, not for what was said or not said but for how it was covered. It seems many sites and analysts don’t have a clue about the difference between training and inference, much less why there are differing hardware needs for each job. They then go on to proclaim that the Cloud AI 100 will “kill X or Y and is a good fit for Z” based on a technically ignorant premise. Aaaargh! Rant over.

So we have an AI chip that has >50x the inference performance of a Snapdragon 820 and >16.66x the performance of the current Snapdragon 855. It is built on a 7nm process, though not necessarily the same one, so efficiency should not be too far off that of the current 855. With one more key piece of information that Qualcomm has inadvertently given away, you can tell a lot about this device.
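As a quick sanity check on those two multipliers, dividing one by the other gives the 855-to-820 ratio they imply. This is only back-of-envelope arithmetic on the figures quoted above, both of which are "greater than" floors rather than measurements, and the variable names are ours.

```python
# Lower-bound speedups quoted for the Cloud AI 100 (both are ">" figures).
SPEEDUP_VS_820 = 50.0
SPEEDUP_VS_855 = 16.66

# If both floors describe the same chip, their ratio is the implied
# AI performance gap between the Snapdragon 855 and the Snapdragon 820.
implied_855_vs_820 = SPEEDUP_VS_820 / SPEEDUP_VS_855

print(f"Implied Snapdragon 855 vs 820 AI performance: ~{implied_855_vs_820:.2f}x")
```

In other words, the two claims are the same claim stated twice, with the 855 taken as roughly three times the 820 on AI work.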

Note: The following is for professional and student level subscribers.

Disclosures: Charlie Demerjian and Stone Arch Networking Services, Inc. have no consulting relationships, investment relationships, or hold any investment positions with any of the companies mentioned in this report.

Charlie Demerjian
