A few days ago, we took a look at the Bulldozer core architecture; now let’s walk through the rest of the chip. Here are the parts that do everything else, collectively known as the uncore.

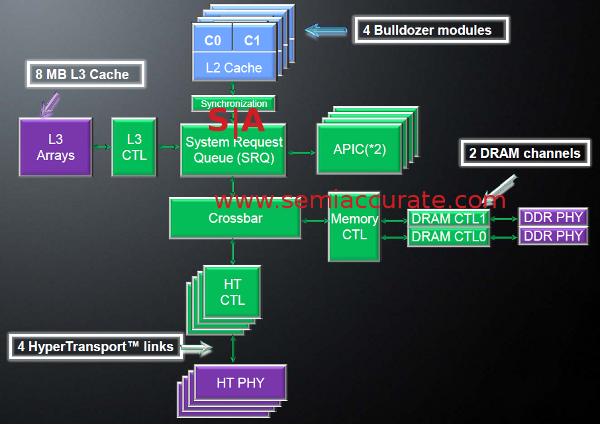

If you look at the Bulldozer die plot, you can see that the cores/modules, inclusive of L2 cache, only take up about half of the die area. The rest is called the uncore, for obvious reasons. The most important part of that uncore is the integrated northbridge: basically the memory controller, L3 cache, and cache controller. The other big items include the System Request Queue (SRQ), crossbar, APICs, and the four HyperTransport (HT) controllers.

Northbridge in detail

The heart of the northbridge is the SRQ and crossbar. Together, they make sure every bit that goes on and off the CPU gets to the right place at the right time. The SRQ takes inputs from the cores, L3 cache and APICs, and makes sure no request steps on another. Interestingly, it not only takes requests from the local cores, but also from remote cores via HT links.

Although it may not look like it in the diagram, if a request comes in from a remote CPU for data on locally attached memory, the SRQ will arbitrate this request, along with local requests, in whatever order AMD has deemed correct. All of these bits flow through the crossbar, which is basically a big switch. Given how AMD broke the parts out, it looks like the crossbar is pretty ‘dumb’ and all the intelligence is in the SRQ.

In multi-socket systems, this should theoretically allow quite a bit more efficiency and scaling. If the SRQ is even nominally aware of which memory addresses are owned locally and which remotely, it can easily skip the bandwidth-consuming requests to the local memory controller for addresses that are obviously not local. With luck, this will ease many of the socket scaling problems of the early K8 and K10h cores by eliminating some cache coherency traffic.
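To make the idea concrete, here is a minimal sketch of address-aware routing. It is not AMD's implementation: the `DramRange` table, the node ids, the 4 GiB-per-socket layout, and the function names are all hypothetical, chosen only to show how knowing which socket owns an address lets a request skip the local memory controller.

```python
from dataclasses import dataclass

GIB = 1 << 30


@dataclass(frozen=True)
class DramRange:
    """One contiguous slice of physical memory and the socket that owns it."""
    base: int   # first address in the range
    limit: int  # one past the last address in the range
    node: int   # hypothetical node (socket) id


def owner_node(address, dram_map):
    """Return the node that owns `address`, per the (assumed) address map."""
    for entry in dram_map:
        if entry.base <= address < entry.limit:
            return entry.node
    raise ValueError(f"address {address:#x} is not mapped to any node")


def route_request(address, local_node, dram_map):
    """Pick a destination without bothering the local memory controller
    for addresses that are obviously not local."""
    if owner_node(address, dram_map) == local_node:
        return "local memory controller"
    return "HyperTransport link"


# Hypothetical two-socket layout: 4 GiB of DRAM behind each socket.
DRAM_MAP = [
    DramRange(0 * GIB, 4 * GIB, node=0),
    DramRange(4 * GIB, 8 * GIB, node=1),
]

print(route_request(1 * GIB, local_node=0, dram_map=DRAM_MAP))
print(route_request(5 * GIB, local_node=0, dram_map=DRAM_MAP))
```

In this toy layout the first request stays on the local socket and the second goes straight out over HT, which is the traffic saving described above.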

One interesting footnote here: if you take a look at the size of the uncore in Bulldozer and Intel’s Sandy Bridge, you can see that the AMD method takes a larger percentage of the die than Intel’s ring bus. How well both scale up, and what the latencies are for both schemes, will have to wait until someone does head to head server benchmarks with multiple socket systems. That said, Intel’s tradeoff seems to put more of the on-chip interconnects in metal layers.

Bulldozer has two new memory controllers capable of running DDR3-1866 natively. They support unbuffered, registered, and Load Reduced DIMMs (LR-DIMMs) at 1.50, 1.35 and 1.25V. LR-DIMMs and 1.25V memory are just starting to hit the price sheets, so it looks like all of the goodies should be available in the near future. In addition to the new specs, this new controller is much more aggressive in power savings. It is heavily clock gated, and can slow down both to save power and to lower temps if something goes wrong. The list of tricks that it can pull is quite long, and this should pay dividends in the server space for light loads.
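For a rough sense of what DDR3-1866 means in bandwidth terms, the arithmetic can be sketched as below. It assumes each controller drives one channel with the standard 64 data bits and uses the nominal 1866 MT/s rate. These are theoretical peaks, not measured numbers, and the function name is just for illustration.

```python
def peak_dram_bandwidth_gbs(megatransfers_per_s, data_bits=64, channels=1):
    """Theoretical peak bandwidth in GB/s (decimal) for a DDR interface.

    Assumes `data_bits` of payload per transfer per channel; ECC check
    bits, where present, are extra and do not carry payload.
    """
    bytes_per_transfer = data_bits // 8
    return megatransfers_per_s * bytes_per_transfer * channels / 1000


per_controller = peak_dram_bandwidth_gbs(1866)
both_controllers = peak_dram_bandwidth_gbs(1866, channels=2)

print(f"{per_controller:.1f} GB/s per controller")
print(f"{both_controllers:.1f} GB/s for both controllers")
```

Under those assumptions, that works out to roughly 14.9GBps per controller and 29.9GBps for the pair.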

The DDR3 controllers are 72 bits wide for ECC protection, as is the L3 cache. That cache can be up to 8MB, and is physically split into four chunks, but is seen as one block. All addresses have the same latency, unlike Sandy Bridge, in which latency depends on the number of ring stops. The L3 can be partitioned to serve as a cache for coherency traffic in multi-socket systems. Depending on need, a chunk of the cache can be set aside, and the memory controllers will only put coherency data there. When in this mode, that chunk of cache is called the probe filter, something first seen in the Istanbul chip line.

Next up is the HyperTransport controller, slightly beefed up from the last generation AMD parts. In Bulldozer, there are four controllers, all 16 bits wide per direction, running at 6.4 gigatransfers/second. That translates to 12.8GBps per link, per direction, or 51.2GBps per direction per socket. Depending on the socket, AM3+, C32, or G34, some of these links may not be exposed, and some may not pass coherency data.
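The bandwidth figures above fall straight out of link width times transfer rate. Here is the arithmetic as a quick sketch; the function name is made up for illustration, and the numbers are the ones quoted in the text.

```python
def ht_link_bandwidth_gbs(width_bits, gigatransfers_per_s):
    """Peak bandwidth of one HT link in one direction, in GB/s."""
    bytes_per_transfer = width_bits / 8
    return bytes_per_transfer * gigatransfers_per_s


LINKS_PER_SOCKET = 4  # four HT controllers per Bulldozer die

per_link = ht_link_bandwidth_gbs(width_bits=16, gigatransfers_per_s=6.4)
per_socket = per_link * LINKS_PER_SOCKET

print(f"{per_link:.1f} GB/s per link, per direction")
print(f"{per_socket:.1f} GB/s per direction, per socket")
```

A 16-bit link moves 2 bytes per transfer, so 6.4 gigatransfers/second gives 12.8GBps, and four such links give 51.2GBps.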

Those links can be split into two 8-bit links, and in multi-die packages, Interlagos now and Magny-Cours for older cores, they do just this. If you look at the four socket system diagrams, everything looks fully connected. Since the 16 core CPUs are two 8 core dies interconnected with HT links, though, there aren’t quite enough links to make every die fully connected to every other die. You can see the diagram here, with the worst case scenario being one extra hop of latency. This is a classic trade-off of package pin counts for latency, but with an average diameter of 1.25 hops, it isn’t going to hobble the system.
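An average of 1.25 hops implies that some die pairs are more than one link apart, and a small sketch shows how such a figure can come about. Everything here is an assumption for illustration: the eight-die topology below is a made-up one in which each die links directly to four others, not AMD's actual wiring, and the average counts a die's zero-hop distance to itself, which is a guess at how the figure is computed.

```python
from collections import deque


def hop_counts(adjacency, start):
    """Breadth-first search: minimum number of links from `start` to each die."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in adjacency[node]:
            if neighbour not in dist:
                dist[neighbour] = dist[node] + 1
                queue.append(neighbour)
    return dist


def diameter_stats(adjacency):
    """Return (max hops, average hops) over all ordered die pairs,
    including each die paired with itself at zero hops."""
    all_hops = [
        hops
        for node in adjacency
        for hops in hop_counts(adjacency, node).values()
    ]
    return max(all_hops), sum(all_hops) / len(all_hops)


# Hypothetical topology: 8 dies in a ring, each linked to its two nearest
# neighbours on either side, so every die has four direct links.
DIES = 8
ADJACENCY = {
    die: [(die + step) % DIES for step in (1, 2, -1, -2)]
    for die in range(DIES)
}

max_hops, avg_hops = diameter_stats(ADJACENCY)
print(f"max {max_hops} hops, average {avg_hops:.2f} hops")
```

In this toy layout each die reaches four others directly and the remaining three through one intermediate die, which gives the same 1.25 average quoted above.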

The HT links all have CRC protection and can retry errors. Like the memory controllers, the HT links can also scale back to conserve power, something that isn’t new to AMD chips, but is more advanced this generation than the last. The take-home message is that HT can scale width and frequency dynamically as needed, and do so more often than before.

Speaking of power management, Bulldozer brings AMD cores right up into the modern age, taking things a bit farther than Llano did. If you read the Llano details, the best way to put it is that Bulldozer has the same features, digital power measurement mainly, but they were baked in from the start, rather than retrofitted to an existing core. This control is now extended to the uncore, something that Llano didn’t do much of, and every block is heavily clock gated in a more granular fashion.

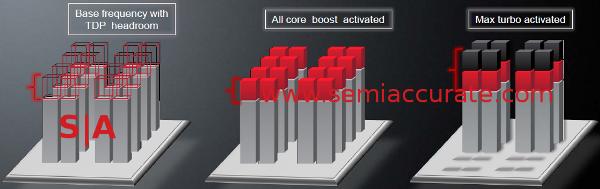

Of the new tricks, the biggest one is called Max Turbo. The idea behind this mode is to allow individual cores to go above the maximum turbo allowed when there is TDP headroom. It works in conjunction with the core C6 (CC6) sleep mode. CC6 is where the lightly used modules flush their caches, save state to a special local chunk of memory, and then have their power shut off. No power means no leakage.

Max Turbo means half go faster

With turbo, current Bulldozer chips can scale all cores up 300MHz when TDP headroom permits. With Max Turbo, if half of the modules are in CC6, the remaining cores can clock up even more. Once again, current chips can clock up another 600MHz, so with Max Turbo enabled, a Bulldozer can boost its speed 900MHz. Some SKUs are limited to less frequency, but the cap for all of them seems to be 4.3GHz. Why?
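The boost rules above boil down to a few lines of arithmetic. This is only a sketch of the behaviour as described, not AMD's actual power management logic: the function name, the "at least half the modules asleep" test, and the example base clocks are assumptions, while the 300MHz, 600MHz and 4.3GHz figures come from the text.

```python
ALL_CORE_TURBO_MHZ = 300   # boost available to all cores with TDP headroom
MAX_TURBO_EXTRA_MHZ = 600  # further boost when half the modules are in CC6
FREQUENCY_CAP_MHZ = 4300   # apparent ceiling across current SKUs


def boosted_clock_mhz(base_mhz, modules_total, modules_in_cc6, tdp_headroom):
    """Estimate the clock of the awake cores under the described rules."""
    if not tdp_headroom:
        return base_mhz
    boost = ALL_CORE_TURBO_MHZ
    # Assumption: Max Turbo kicks in once at least half the modules sleep.
    if modules_in_cc6 * 2 >= modules_total:
        boost += MAX_TURBO_EXTRA_MHZ
    return min(base_mhz + boost, FREQUENCY_CAP_MHZ)


# Example with a hypothetical four-module part at a 3100MHz base clock.
print(boosted_clock_mhz(3100, modules_total=4, modules_in_cc6=0, tdp_headroom=True))
print(boosted_clock_mhz(3100, modules_total=4, modules_in_cc6=2, tdp_headroom=True))
# A higher hypothetical base clock runs into the 4.3GHz cap.
print(boosted_clock_mhz(3600, modules_total=4, modules_in_cc6=2, tdp_headroom=True))
```

With these example inputs, the first case gets the plain 300MHz turbo, the second gets the full 900MHz, and the third is clipped by the cap.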

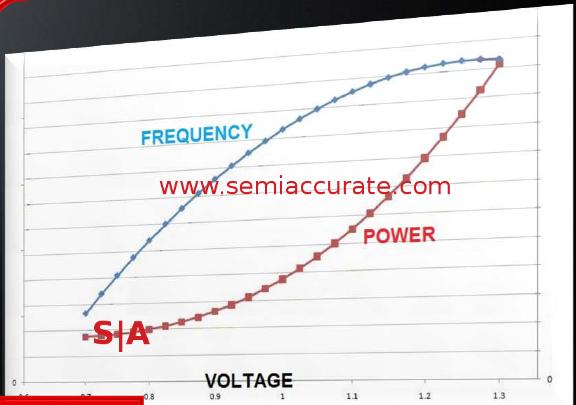

Note the leveling off of frequency

The diagram above shows off the problem with boosting frequency: increases tail off quickly with added power. After a while, you are basically burning wattage for almost no gain in performance. For the initial crop of Bulldozer parts, it looks like that curve flattens out at 4.3GHz, but as we have seen, these chips will clock to record levels if prodded appropriately.

One thing to note: the CC6 state is per module, since the two cores in a module share too many resources to put one half into CC6 with the other awake. It will be interesting to see what happens if the tools to manually control some of these features are released; there are a lot of knobs for geeks to play with on Bulldozer.

In the end, the uncore looks very familiar to the AMD uncores of the past. On a macro level, the biggest difference is that the northbridge now runs at a fixed frequency, 2.0 or 2.2GHz, rather than a multiple of the core clocks. Between this and a higher minimum core clock, 1.2 or 1.4GHz depending on exactly which model you are talking about, the complaints about wake time on older chips should be a thing of the past. Taken together, the uncore doesn’t look incredibly different, but as with the core, the devil is in the details. It is a completely new uncore, and nothing seems to be left untouched, but it is definitely evolutionary.

Charlie Demerjian
