Last week Qualcomm, Xilinx, and Mellanox all teamed up for a server announcement. The significance of it is not the hardware shown but the partnerships and what they imply.

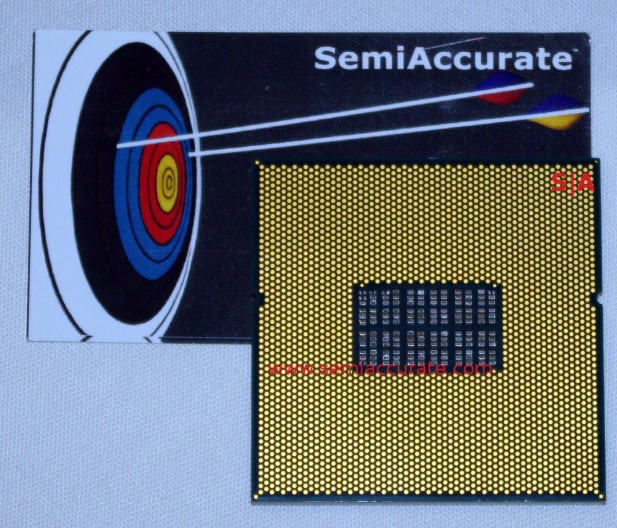

The news that grabbed headlines for the day was of course that Qualcomm not only announced a server CPU but showed off a prototype part and a working cluster of servers. The CPU itself is pretty nondescript: it has 24 cores of an unnamed custom server design that they claim is not this one, even if they didn’t acknowledge the code name itself. The top of the CPU is completely bare without a single mark or number, and the underside is all pins and passives as you can see below.

Qualcomm’s prototype server CPU

This prototype has 24 cores working at an undisclosed frequency, and that is about as technical as Qualcomm got. They did specifically say that the prototype 24-core variant would not be going into production; the release parts would have both higher and lower core counts and use differing pinouts. No clue what that could mean, but higher and lower counts could cover quite a range of performance targets, don’t you think? Either way, the server itself looks like this.

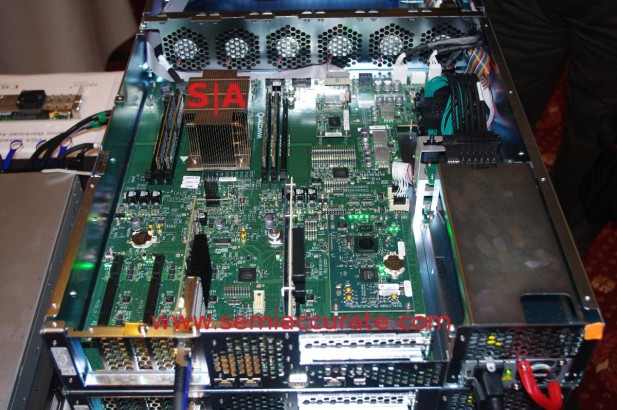

The ARM server in question

There are a few things you can see from the picture above. The most obvious is that this CPU, at least in this platform, is a two channel DDR4 device. We say that because there are six DIMM slots of which four are filled. If the device below were a three channel SoC, it is unlikely they would populate only four of the six slots. For the pedantic, DIMM slots are numbered 0-5 with slots 1, 2, 4, and 5 occupied, 0 and 3 open. It could be 2 of 3 channels filled, and the pin count certainly supports that many channels, so I’ll leave it up to you to decide which way things go.

Additionally, the server CPU has PCIe on die. In this case it looks a lot like 32 lanes of presumably PCIe3, but don’t rule out PCIe4 on the shipping devices. PCIe4 silicon is available from Synopsys at the moment, and the other half of the demo we didn’t show you at IDF was that it interoperated with working PCIe4 silicon from Mellanox. Why is this important or even relevant? Mellanox was one of the two close partners at the event, and 100GbE cards could make good use of the bandwidth. A PCIe3 8x slot offers about 64Gbps of raw bandwidth; a PCIe4 8x slot doubles that to 128Gbps. One of these slots will support a 100GbE card, the other won’t.
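For those who want to check the slot math, here is a minimal sketch of the arithmetic. It assumes the standard per-lane signaling rates of 8GT/s for PCIe3 and 16GT/s for PCIe4 and the 128b/130b line encoding both generations use. The function names are ours, made up for illustration, and the numbers ignore packet and protocol overhead, so real-world throughput will land somewhat lower.

```python
# Rough PCIe slot bandwidth check. Function names are hypothetical,
# chosen for this illustration only.

# Raw per-lane signaling rate in GT/s for each PCIe generation.
GT_PER_LANE = {3: 8.0, 4: 16.0}

# PCIe3 and PCIe4 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def raw_gbps(gen, lanes):
    """Raw signaling bandwidth of a slot in Gbps, one direction."""
    return GT_PER_LANE[gen] * lanes


def usable_gbps(gen, lanes):
    """Bandwidth left after line encoding; ignores packet overhead."""
    return raw_gbps(gen, lanes) * ENCODING_EFFICIENCY


def fits(link_gbps, gen, lanes):
    """True if a NIC running at link_gbps fits in the given slot."""
    return usable_gbps(gen, lanes) >= link_gbps


for gen in (3, 4):
    print(
        f"PCIe{gen} x8: {raw_gbps(gen, 8):.0f}Gbps raw, "
        f"{usable_gbps(gen, 8):.1f}Gbps after encoding, "
        f"100GbE fits: {fits(100, gen, 8)}"
    )
```

The same check shows a 40Gbps link fitting comfortably in a PCIe3 8x slot, while 100GbE needs either PCIe4 8x or a wider PCIe3 slot.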

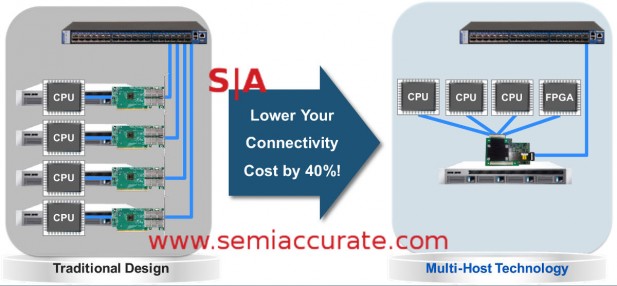

Mellanox ConnectX 4 NIC tech

Not entirely coincidentally, Mellanox was showing off their ConnectX 4 NIC which will do 10-100GbE. The interesting part about this adapter is that it is made for Open Compute sleds by way of their multi-host technology. This allows up to four discrete CPUs to share one adapter, and more hosts are promised in future generations. The demo systems shown off were talking to a Mellanox switch at 40Gbps, which again strongly suggests PCIe3 on the board. This pairing is going to be ready for Open Compute datacenters from day one.

That brings us to the last of the partners, Xilinx. They showed off an FPGA board, although it was not in the demo systems, and promised it would be compatible with Qualcomm ARM server systems from day one too. If you look at the way the server market is going, specifically the economics thereof, it is clear FPGAs are going to play a major part. If you don’t think so, ask yourself why Intel just paid a premium for Altera. It wasn’t just to fill their fabs. Xilinx adds a lot to the partnership, especially if the tools and software suites are there from launch.

So that is what Qualcomm brought to the table: a complete offering of server CPUs, connectivity, and programmable logic. Prototype boards have been shipping for a while now and the real systems will be announced next year. By that time the tires should have been kicked, the bugs on the software and networking side mostly quashed, and the customers all ready to deploy. If you had any doubts about the readiness of ARM servers, Qualcomm, Mellanox, and Xilinx working together should dispel them. S|A

Charlie Demerjian
