Note: This is Part 2 of 2; Part 1 can be found here.

The three most pressing problems of modern silicon design, power, I/O pins, and yields, can all be addressed by chip stacking. A silicon on silicon 3D stack lets a designer make interconnects at the scale of the structures on the chips; you simply draw them like you would a metal layer. Connecting the same die to an organic or ceramic carrier makes the bumps larger by an order of magnitude, because the minimum size there is much larger than that of silicon on silicon. As Nvidia found out with Bumpgate, thermal stress and other factors mandate this size difference.

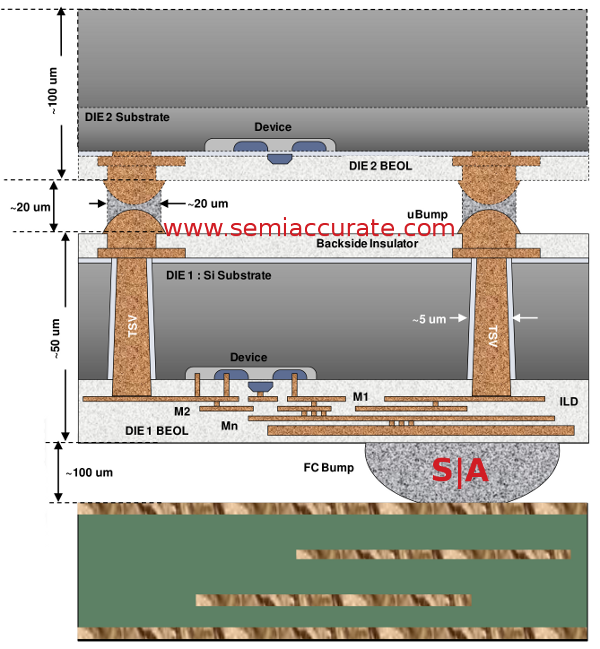

Note that a human hair is ~100 microns wide.

The picture above is a cross-section of a memory on logic stack from the Qualcomm presentation. As you can see, the die to die ‘microbumps’ are ~20 microns across, and the die to package bumps are 5x larger at 100 microns. The package to board balls/bumps are 5x larger than that, so 500 microns or so. This 1:5:25 ratio was consistent across the talks from all five companies, but the absolute values vary with the process used and the intended markets. The short story is that in the same area, you can fit 25x as many connections within a stack as you can to a carrier, because a bump that is 5x smaller in each direction packs 5 x 5 times as densely. Add in the far lower thermal stresses because of very similar materials, and you have a win for stacking.
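The 25x figure follows from squaring the linear ratio. Here is a minimal sketch of that arithmetic, assuming bumps sit on a square grid whose pitch scales with bump size; the constant and function names are made up for illustration, and only the micron values come from the text.

```python
# Approximate bump sizes quoted in the article, in microns.
DIE_TO_DIE_UM = 20
DIE_TO_PACKAGE_UM = 100
PACKAGE_TO_BOARD_UM = 500


def density_gain(coarse_um: float, fine_um: float) -> float:
    """Gain in connections per unit area when moving to finer bumps.

    Assumes a square grid whose pitch scales with bump size, so the
    density goes as the square of the linear size ratio.
    """
    return (coarse_um / fine_um) ** 2


print(density_gain(DIE_TO_PACKAGE_UM, DIE_TO_DIE_UM))        # 25.0
print(density_gain(PACKAGE_TO_BOARD_UM, DIE_TO_PACKAGE_UM))  # 25.0
```

Real bump layouts are rarely a full uniform grid, so treat this as an upper bound on the gain rather than a design rule.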

If you have multiple dies, not only can you get 25x the pins, but the wires are thinner, shorter, and potentially made from better quality material. This lowers the RC (Resistance Capacitance) mentioned previously by large amounts, lowering power used. In aggregate, 25x the I/Os between chips in a stack can consume far less net power than a fraction of that bandwidth going off package.

Xilinx, the one company that is producing stacked chips in volume, has one variant called the Virtex 7 2000T. This uses four FPGA slices on an interposer, and the number of connections between slices is listed as “>10K”. The chip has a 45 x 45mm package, so the 1mm ball pitch would mean a maximum of 2025 pins out. For reference, the latest Intel Xeon CPUs have 2011 pins, so Xilinx is not way out of the mainstream here. Instead of going down in count, the first stacked chip on the market brings a 5x increase in I/Os over what the package itself could offer, and progress is moving toward yet higher densities very quickly.
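For anyone who wants to check the pin math, a rough sketch follows. It assumes a fully populated square ball grid, which is a simplification, and it takes the quoted “>10K” as a lower bound of 10,000; the function name is hypothetical.

```python
import math


def max_balls(package_mm: float, pitch_mm: float) -> int:
    """Upper bound on balls for a square package with a full grid."""
    per_side = math.floor(package_mm / pitch_mm)
    return per_side * per_side


pins_out = max_balls(45, 1.0)
inter_slice = 10_000  # lower bound implied by ">10K"

print(pins_out)                             # 2025
print(round(inter_slice / pins_out, 1))     # 4.9
```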

In addition to the I/Os and power, a company gets all sorts of yield advantages from stacking. You can connect multiple smaller dies that each yield far higher than a large monolithic part. Better yet, you can put in many different types of chips, even ones made on incompatible processes. Logic and DRAM? No problem. Bleeding edge process logic coupled with an analog chip made with the process equivalent of crayons on toilet paper? Easy. Throw in high voltage I/Os on a different die, and you have a worst case that is probably impossible to do on a monolithic part. Better yet, the latency between dies is far better than going off socket, a massive gain for memory bandwidth even without taking the potentially increased widths into account. Yes, it is the best of both worlds.
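To put a rough shape on the small die yield argument, here is a sketch using the textbook Poisson yield model, where yield is exp(-area x defect density). The model choice, the defect density, and the die areas are all assumptions picked for illustration; none of them come from the talks.

```python
import math


def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies with zero defects under a simple Poisson model."""
    return math.exp(-area_cm2 * defects_per_cm2)


# Hypothetical numbers, chosen only to show the trend.
DEFECTS_PER_CM2 = 0.5
BIG_DIE_CM2 = 4.0
SLICES = 4

big = poisson_yield(BIG_DIE_CM2, DEFECTS_PER_CM2)
small = poisson_yield(BIG_DIE_CM2 / SLICES, DEFECTS_PER_CM2)

print(f"monolithic die yield: {big:.1%}")
print(f"per-slice yield:      {small:.1%}")
```

Note that the gain only shows up if the slices can be sorted before assembly. Under this model, four untested slices stacked together yield `small ** 4`, which is exactly the monolithic number again.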

To throw some cold water on this happy picture, we should point out that there are problems. The first is that, well, 3D parts can’t be made in high volume yet. There is talk and there are proposed solutions, but no one is doing it at the moment; Xilinx is only 2.5D. This will change in a hurry. Some prototypes have been spotted here and there, and Intel is about to talk about Crystalwell next week even if the Ivy Bridge variant never made it to market.

What are the problems preventing stacking from coming to market? There are many, from construction to testing to cost, including many show stopping technical hurdles that have not been solved yet. They are all being worked on by many bright people, and serious progress is being made with each passing day. Let’s look at some of the big issues keeping us from this technical nirvana.

First up is a problem called known good die. In short, it is really hard to test a die before you make it into a functional device. This is a problem everywhere, but with a stacked part, a large stack can be ‘killed’ by a single bad die. Imagine a DRAM die with a 95% yield. If you stack eight of them, 0.95 ^ 8 ~= 0.66, so 5% bad becomes about 34% bad, and that is before any assembly losses. A bad $1 DRAM can turn a $2000 FPGA into a keychain instead of a saleable part, not a good thing.
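The known good die arithmetic is easy to play with. This sketch assumes each die fails independently and that any one bad die kills the stack; the function name is just for illustration.

```python
def stack_yield(die_yield: float, dies: int) -> float:
    """Yield of a stack of untested dies, assuming independent failures
    and that a single bad die kills the whole stack."""
    return die_yield ** dies


y = stack_yield(0.95, 8)
print(f"stack yield: {y:.1%}")      # stack yield: 66.3%
print(f"stack loss:  {1 - y:.1%}")  # stack loss:  33.7%
```

Try a taller stack or a slightly worse die and the numbers fall off quickly, which is why pre-assembly testing matters so much.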

To complicate matters more, if you have a foundry making your chips, a DRAM supplier giving you memory, and a packaging house putting it all together, who is at fault for a bad device? Who pays? Who tests it? What if parts are not testable before assembly? This economic reality tends to preclude anything other than test runs and low volume, high sale-price devices. The Virtex 7 2000T is not a cheap device.

The known good die numbers above assume that assembly has a 100% success rate, something that even modern single die devices don’t achieve. To do even a 2.5D stack, you add literally dozens of steps to the manufacturing process, and while each has a very high success rate, together they add up to a large failure rate. Again, progress is being made, but unless you have a very high end product, stacking is not a good option.
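One way to see how demanding "dozens of steps" is: turn the question around and ask how good each step has to be to hit a target assembly yield. The sketch below assumes every step succeeds independently and with the same probability; the step count of 36 and the targets are hypothetical stand-ins, not figures from the talks.

```python
def required_step_yield(target_yield: float, steps: int) -> float:
    """Per-step success rate needed for `steps` independent, identical
    steps to reach `target_yield` overall."""
    return target_yield ** (1.0 / steps)


STEPS = 36  # hypothetical stand-in for "dozens of steps"

for target in (0.90, 0.95, 0.99):
    per_step = required_step_yield(target, STEPS)
    print(f"target {target:.0%} -> each step must succeed {per_step:.3%} of the time")
```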

One possible solution is repairability after a stack has been manufactured. Some chips, FPGAs and memory in particular, are amenable to this. For that reason, you see stacked memory coming in the short term, and Xilinx is already on the market. Logic on Logic, Memory on Logic, and other things that do not include highly repairable or pre-manufacturing testable dies are much farther out. Once again, we are not there yet, but progress is being made. S|A

Charlie Demerjian
