At Hot Chips 24, AMD’s Mark Papermaster gave a keynote speech that had a few technical tidbits in it. Let’s take a look at two of these in particular, the Steamroller core and high density libraries.

There was a lot more to the speech, but since that is marketing, buzzwords, and related fluff, we will spare you a rehash of it. The only phrase that you really need to know is “Surround Computing”, AMD’s term for computing all around you, hopefully transparently. It is not just a five monitor game of Generic FPS #12: Wallet Lightening DLC Conveyance Addendum played in a dark room to tan both ears at once. Expect to see Surround Computing used a lot in future messaging from AMD.

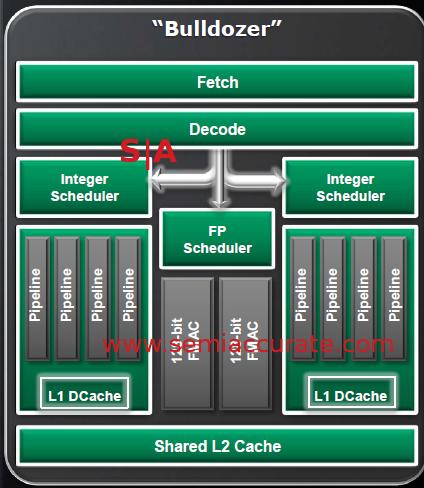

Back to the interesting stuff, the Steamroller. If you recall, AMD’s cores are named Bulldozer, Piledriver, Steamroller, and Excavator. Bulldozer is out on the market in FX and Opteron guises, and Piledriver came out as the core in Trinity. The next variant is Steamroller, and that won’t come out until either Kaveri or the 2013 Opteron/FX chips break cover. Bulldozer was a radical architectural change from the status quo: it had a shared front end, a shared FPU, and two distinct integer units that were somehow called ‘cores’. Piledriver cleaned up a lot of what made Bulldozer underwhelm, but the fundamental problems that hamstrung Bulldozer didn’t go away.

Bulldozer block diagram

If you recall, that shared front end was supposed to be fast enough to feed both cores without bottlenecking either one. It wasn’t. It was supposed to have so much capacity that when one core was idle, the second would positively fly. It didn’t, but it did fall less flat with one unit idle, far less flat. The shared front end did the silicon equivalent of what that guy in the hockey mask does to wayward teenagers wandering outside of that cabin in the woods…

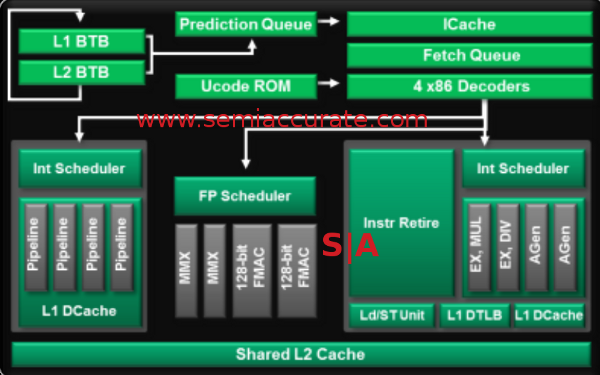

Piledriver doesn’t change much

The second revision, called Piledriver, fixes a lot of little problems, but can’t touch the architectural ones. Thinking of Piledriver as Bulldozer 1.5 is far more accurate than calling it a complete redo; it is simply evolutionary. A lot of things were cleaned up, and the most major change seems to be adding two MMX pipes to the FP unit. In the end, a lot of small bottlenecks were opened up, but that shared front end is still picking off the teenagers who went looking for their comrade, you know, the one that Bulldozer’s decoder got.

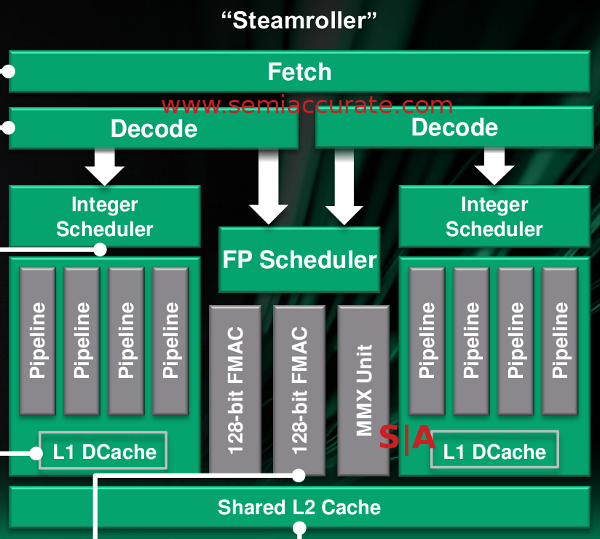

That brings us to the latest addition to the line, Steamroller. On paper, it fixes a lot. Steamroller is the Bulldozer we were hoping to get a year and a half ago. Had it come out in 2011 instead of 2013, it very well might have set the world on fire, but it didn’t. Steamroller is the one kid of the group that makes it out of the forest alive. Why? Take a look at the front end, and compare it to the two prior architectures.

Steamroller from too far away

There are two things to note: the dropping of one MMX pipe in the FPU, and the two decoders in the front end. The one that matters is of course the decoders, and it explains why the teenager reading computer architecture books made it out of the forest without being strangled. It fixes _THE_ major problem in Bulldozer. No longer are the cores strangled. In theory. Let’s wait for silicon before we celebrate, someone in a hockey mask could still pop out of the cake in the last scene.

In a world where CPU architecture people would kill for a full percentage gain in the front end, and one or two fractional percentage gains from different areas are considered a clear win, AMD is claiming a 30% gain in ops delivered per cycle and 25% more max-width dispatches per thread. In short, they did the obvious, and it did the obvious, but 30% is a massive gain that is hard to overstate.

If nothing else gets in the way to hamstring performance, and at this point we would be fairly surprised if something did, then Steamroller should bring about a massive performance gain in single threaded code. To make things better, it is unlikely to fall flat when the second core in a pair is doing something strenuous like hosting a solitaire game. On paper, this is what we have been waiting for.

That brings up the other point: 30% is borderline crazy for an increase, especially one that directly relates to performance. The decoders were the main bottleneck in the architectural paradigm up to this point, so most of that should carry over to the end user on single threaded code. The problem? What was the starting point again? Oh yeah, not so hot. A 30% increase in IPC from a current Intel core would be greeted with blank stares and incredulous looks from people who understand the tech. 30% from Bulldozer’s starting point is just enough to get AMD back in the game. That said, it’s about time.
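To put the starting point problem in numbers, here is a minimal sketch. It assumes the entire 30% carries through to IPC, which is the optimistic case, and `baseline_ratio` is a hypothetical input (your IPC divided by a rival's before the improvement), not a measured figure for any AMD or Intel part.

```python
def uplift_outcome(baseline_ratio: float, uplift: float = 0.30) -> float:
    """Return IPC relative to a rival after applying a fractional uplift.

    baseline_ratio is hypothetical: our IPC divided by the rival's IPC
    before the improvement (1.0 means parity).
    """
    return baseline_ratio * (1.0 + uplift)


def parity_threshold(uplift: float = 0.30) -> float:
    """Smallest baseline ratio at which the uplift reaches parity."""
    return 1.0 / (1.0 + uplift)


if __name__ == "__main__":
    print(f"parity needs a baseline of at least {parity_threshold():.3f}")
    for ratio in (0.6, 0.8, 1.0):  # illustrative values only, not measurements
        print(f"baseline {ratio:.2f} -> {uplift_outcome(ratio):.2f}")
```

The arithmetic is the whole point: a 30% gain only reaches parity if you started at roughly 77% of the other guy or better. From parity it would be a 1.3x lead; from well behind, it gets you back in the game.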

That brings us to the other end of the spectrum, high density libraries (HDL). As their name implies, they are libraries used to design chips, and they prioritize area over speed. Bulldozer has never had a problem with raw clock rate; in fact, it is the current world record holder in that regard. Piledriver will undoubtedly go faster on a raw clock basis, and likely have better IPC while doing it, but those chips are not out yet. The take-home message is that raw clock rate is not a big problem for this architecture.

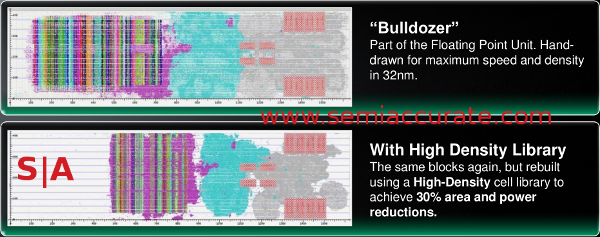

Before and after a button press

With that in mind, the HDL slide was rather interesting. AMD is claiming that if you rebuild Bulldozer with a high density library, the resulting chip has a 30% decrease in size and power use. To AMD at least, this is worth a full shrink, but we only buy that claim if it is 30% smaller and 30% less power hungry, not 30% in aggregate. That said, it is a massive gain with just a button press.
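The difference between the two readings of that claim is easy to sketch. The snippet below is a back-of-the-envelope model, not AMD's data: `scale` is a hypothetical helper, and the 15%/15% split is only one assumed interpretation of "30% in aggregate" used for contrast.

```python
def scale(area_cut: float, power_cut: float) -> dict:
    """Scale a chip normalized to area = 1.0 and power = 1.0.

    area_cut and power_cut are fractional reductions (0.30 means 30% less).
    """
    area = 1.0 - area_cut
    power = 1.0 - power_cut
    return {
        "area": area,
        "power": power,
        # Power per unit area, relative to the original chip.
        "power_density": power / area,
        # How many more chips fit in the same silicon, ignoring edge
        # effects and yield (a simplifying assumption).
        "chips_per_area": 1.0 / area,
    }


if __name__ == "__main__":
    both = scale(0.30, 0.30)        # 30% smaller AND 30% less power
    aggregate = scale(0.15, 0.15)   # assumed reading of "30% in aggregate"
    for name, result in (("both", both), ("aggregate", aggregate)):
        print(name, {k: round(v, 3) for k, v in result.items()})
```

Under the generous reading, power density stays flat while about 1.43 times as many chips fit in the same area. Under the assumed aggregate reading that drops to roughly 1.18 times, which is a lot harder to sell as a shrink.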

AMD should be applauded for this, and it would have been, but during the keynote the one thing that kept going through my mind was, “Why didn’t they do this 5 years ago?” If you can get 30% from changing out a library to the ones you build your GPUs with, didn’t someone test this out before you decided on layout tools?

I am not a CPU architect, nor am I an EE, but it doesn’t take that much of a leap of logic to see how a simple test like this would be worth investigating. No, not a full layout of the new part, but a simple, coarse grained stab at the concept with a once over analysis to see if it moved the needle in the right direction. Time, resources, and internal bandwidth are always in short supply, but you would think even a quick look at the concept would have revealed the potential if doing it for real gains 30%.

Like the split decoder, the HDL idea seems to be one that comes too late. There are probably really good technical and logistical reasons for both not coming years sooner, but on paper, it is such a massive gain that you have to wonder. Between the two ideas, Steamroller and Kaveri look to be damn good parts. Trinity is better than anything Intel can make for consumer uses, but that is in spite of the core, not because of it. With a more than incremental leap in both the core and the layout, AMD looks to be on the right path. Let’s see what happens when we have Steamroller based silicon in hand. S|A

Charlie Demerjian
