AMD has been ramping up its tools effort of late to support GPU compute on the server side, and today it is Java’s turn again. As SemiAccurate mentioned a while ago, AMD is not just making slides about servers; it is actually making tools.

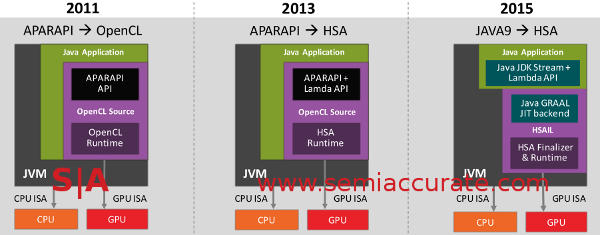

Two years ago, AMD announced that the Aparapi API could target OpenCL, so that one could explicitly compile Java to a GPU-aware parallel environment. Essentially Java bytecode in, OpenCL out. While this is useful, it is hardly a killer app, and even AMD admitted this was only the first step. Today they are releasing Step 2 and talking about Step 3.
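To give a feel for the Step 1 programming model, here is a minimal sketch of the kernel style: you write a per-element `run` body and execute it over a range of work-item IDs. The `SimpleKernel` class below is a hypothetical stand-in written for this illustration so the example runs on a stock JVM; it is not Aparapi's own class, and it only falls back to CPU threads rather than generating OpenCL.

```java
import java.util.Arrays;
import java.util.stream.IntStream;

class KernelSketch {
    // Hypothetical stand-in for a kernel base class (an assumption for this
    // sketch, not the real Aparapi API). A real GPU framework would translate
    // the body of run() for the device instead of calling it on the CPU.
    abstract static class SimpleKernel {
        // Called once per work item; globalId identifies the element to process.
        abstract void run(int globalId);

        // CPU fallback: invoke run() for every ID in [0, range) in parallel.
        void execute(int range) {
            IntStream.range(0, range).parallel().forEach(this::run);
        }
    }

    // Squares every element; each work item touches only its own index,
    // so there are no dependencies between work items.
    static float[] square(final float[] in) {
        final float[] out = new float[in.length];
        SimpleKernel kernel = new SimpleKernel() {
            @Override
            void run(int globalId) {
                out[globalId] = in[globalId] * in[globalId];
            }
        };
        kernel.execute(in.length);
        return out;
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(square(new float[] {1f, 2f, 3f})));
    }
}
```

The point of the shape is that the loop body is isolated as a unit that a translator could, in principle, lift out and ship to a GPU; the boilerplate around it is what the later steps aim to remove.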

The progression from Java hack to native

The big news is that Java 8 is now HSA aware through Aparapi, and you can use Java lambda expressions to represent GPU-targeted code. Better yet, this is all native: there is no need to modify the JVM, and everything lives in external AMD-provided libraries. Step 2 is effectively going from Java 8 lambda expressions to OpenCL and HSA through Aparapi. While this is better than Step 1, it is still not transparent fire-and-forget Java to GPU.

Luckily for the code monkeys, that is coming in 2015, which says Java 9 to the author. This step does away with Aparapi and connects Java lambdas directly to HSA through something AMD and Oracle call Project Sumatra. Sumatra will allow some constructs to be sent directly to the GPU by the JVM.
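As a rough illustration of the kind of construct involved, the sketch below uses nothing but the standard Java 8 stream API: a parallel `forEach` over an index range with a lambda body. The class and method names are made up for this example. On a stock JVM this simply runs on CPU threads; whether a given JVM would ever offload such a lambda to a GPU is an assumption about Sumatra-style runtimes, not something this code controls.

```java
import java.util.Arrays;
import java.util.stream.IntStream;

class LambdaVectorAdd {
    // Element-wise vector addition written as a data-parallel lambda.
    // Each index is independent of the others, which is what makes a loop
    // like this a plausible offload candidate.
    static float[] add(float[] a, float[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("arrays must have the same length");
        }
        float[] out = new float[a.length];
        IntStream.range(0, a.length)
                 .parallel()
                 .forEach(i -> out[i] = a[i] + b[i]);
        return out;
    }

    public static void main(String[] args) {
        float[] sum = add(new float[] {1f, 2f, 3f}, new float[] {10f, 20f, 30f});
        System.out.println(Arrays.toString(sum)); // prints [11.0, 22.0, 33.0]
    }
}
```

Note that there is no kernel class and no explicit range object here, only ordinary Java; that is the difference between the library approach and having the JVM make the connection itself.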

This is the direct Java-to-GPU path that is considered by some to be the holy grail of heterogeneous compute. That said, it won’t be automagical, but it should be a big step in the right direction. If you are writing code on this level, you should have a fairly good idea of what to target the GPU with and which parts to avoid. If not, start here and work your way up.

Who is making the tools to do all of this? AMD says that it and Oracle are doing the work on Java itself, while Suse is assisting AMD on the GCC side to target HSA with that suite. PGI is putting OpenACC compiler directives in its tools, AMD has a MathCL library for hardcore numeric work, and ArrayFire is putting out clMath for similar purposes. On top of this, AMD is announcing a full tool suite called CodeXL 1.3 for Windows and Linux, but unfortunately the briefing on that wasn’t Linux compatible, so we can’t tell you more on that, sorry.

In the end, it looks like AMD is finally taking tools and libraries seriously. Some companies announce tools, projects, and efforts that show lots of promise, only to never mention them again a few months later. When AMD announced its first tools suite and library efforts a few years ago, it was an open question as to which way the company would go. From the look of things today, we are cautiously optimistic that these objectives are real and will remain viable for the mid-term at least. This could be quite interesting for the server world before all is said and done. S|A

Charlie Demerjian
