Last week AMD held Core Day, where they finally explained to the public what their strategy is going forward. For those still unsure, it involves x86 and ARM cores, and yes, it does make sense to SemiAccurate.

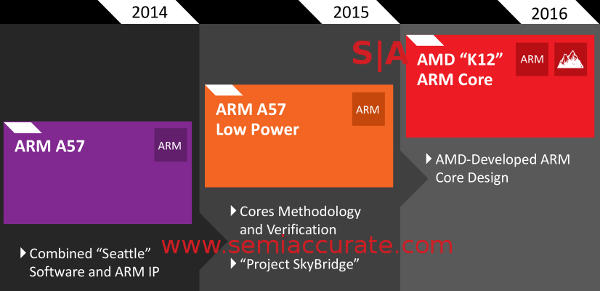

The main announcement from Core Day was one of the worst kept secrets in the semiconductor world: AMD has an ARM architectural license and is developing their own core. This new ARM core, called K12, is due in 2016 after two generations of SoCs/CPUs that use vanilla A57s. The first of these new A57 chips is the already announced Seattle, an 8-core 28nm CPU with 8MB of L3 cache. Although AMD would disagree, it should be seen as more of a tire kicking exercise for potential customers than a volume production CPU.

Updated May 14, 2014 @ 11:30am: Changed 20nm to 28nm above.

More of a roadmap than before, less than we want

If you don’t understand the different ARM licenses, take a look at our two-part writeup here and here. AMD is probably starting out with a multi-use license and moving on to architectural. Between the two steps there is a stopgap part that uses “low power” A57s, but if you take a look at the Seattle boards below, the heatsink isn’t all that big. Then again the devil is in the details, most notably clock speeds. AMD is staying so mum here that most people, SemiAccurate included, almost write off the rest of what they say.

AMD’s Seattle server board and Open Compute board

The part code-named SoC15 also has two interesting aspects to it: one is cores methodology and verification, the other is called “Project Skybridge”. Methodology and verification is the short way of saying that they are using SoC15 and its verified A57 cores to test their tools against before they do their own internal ARM core. Having a known good tool chain and surrounding processes does help; need we bring up Barcelona to drive the point home? In any case AMD is doing this the smart way and isn’t shy about telling the world.

Project Skybridge is much more interesting: it uses the same CPU pinout for both the ARM and x86 variants of the same chip. On die the fabric is the same, helped by the common HSA/FSA interfaces that both AMD and ARM have been talking about for almost three years now.

Don’t think of this as two chips that share a common pinout, think of it as an entire CPU or SoC with everything in common but the cores. Those cores are far less important than the rest of the chip, they are just another swappable component. I would say we exclusively told you about this over three years ago, but well, we did just that. I think the naysayers are laughing at this one because they still don’t understand what is going on, much less its significance. Hi Didier.

From there SoC16 mainly adds a new custom AMD designed ARM core. Although this may sound like a big deal, it is much less significant than the changes SoC15 brings; that is the hard stuff. Adding K12 to the mix once the Skybridge methodology is in place is more or less a component swap, like a GPU or a memory interface. Given how the SoC design is done, it should be a proverbial piece of cake covered in sprinkles of 16nm transistors. It will be interesting to see what AMD’s cores can do better than ARM’s A57 or possibly this core.

So the transition goes distinct ARM A57 CPU and x86 platforms in 2014, common socket and more importantly common uncore for the CPU/SoC in 2015, and then custom AMD 64-bit ARM core in 2016. That makes sense and the stepwise transition is a good one to minimize the potential for showstopping bugs and delays. The real question in most people’s minds is why bother? It is a good one, and it is a valid one, and now AMD finally has answers.

Before we get into that issue there is another one that comes up first. That question is why would you bother with a vanilla AMD sourced A57 core rather than a custom one from Applied Micro, or a vanilla version from Samsung, Mediatek, Qualcomm, TI, or any of the other players making ARM server chips? The answer from AMD is twofold. First, theirs is ready now and should ship really soon. AMCC has one ready in the same vague time frame, but theirs is 40nm while AMD’s is 28nm, and one look at the respective heatsinks shows why AMD has an advantage here. Then again you would be best off waiting for independent numbers on both before you commit.

More important is AMD’s experience in the datacenter. They have been a serious player in that space for over a decade; the Opteron was launched in 2003 and it took them to the top of this world. They know what it takes to play in this space and that the little things count more than the big ones. Having a faster chip is all fine and dandy, but if you can’t manage it, you are not a contender. The little misses add up to added TCO, usually enough to break a proposal on economic grounds alone. Don’t underestimate how much the non-bullet point factors make a difference, it really is a make or break issue.

AMD knows the ropes here, knows what to do and not to do, and where the pitfalls are. AMD has been there, done that, and screwed up almost every one of the must-have items at one point in the past. That said, they learned what not to do and how, and with luck they won’t repeat those mistakes. Intel plays on another level in this game, but the rest of the ARM contenders are still at the bottom of the learning curve, far below where AMD sits. It is a steep curve and AMD sees their current spot as a serious advantage.

Back to the greater question of why anyone would bother with an ARM core vs an x86 core, that is a bit thornier. The most important issue on this front is software: x86 has it, the others don’t. x86 is the gold standard for datacenter ISAs, with the entire infrastructure built around it. In the past few months, however, ARM has been catching up fast, with the entire Ubuntu and Red Hat package base now ported over and running on ARM and only small fractions of a percentage of packages not compatible. In short, the software is there now.

What this means is that ARM and x86 are now interchangeable on almost every level. It doesn’t matter what you deploy, the software should just work. This is of course true for everything but Windows, but Microsoft is no longer relevant in the datacenter. Linux runs the overwhelming majority of sockets in this space, Microsoft itself and its Azure product being the only notable exception. ARM should be seen as interchangeable with x86.

That means the only real differentiator is price, or more to the point TCO. TCO is a thorny question that starts with purchase price, then adds efficiency, and gets really nebulous from there. Each situation is different, but let’s just say none of the ARM CPU vendors will likely charge the hefty premium Intel does. ARM is also seen as more efficient than x86 parts, but that is also an open area of debate that relies more on specific usages than any one testable metric. ARM is a player; how strong an offering their core is depends more on the individual customer’s workload than anything else.
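For readers who want to put rough numbers on that, here is a minimal sketch of the arithmetic, not a real TCO model. It covers only the two pieces named above, purchase price and efficiency, plus a catch-all yearly cost for everything nebulous. Every name in it (`ServerOption`, `energy_cost_usd`, `tco_usd`, the `pue` facility overhead multiplier) is our own hypothetical, and it deliberately ships with no vendor numbers because we don’t have any worth trusting yet.

```python
from dataclasses import dataclass


@dataclass
class ServerOption:
    """One candidate server. All fields are inputs you must supply yourself."""
    name: str
    purchase_price_usd: float  # up-front cost per server
    avg_power_watts: float     # average draw under *your* workload
    yearly_ops_usd: float      # management, support, and other per-server costs


def energy_cost_usd(option, years, usd_per_kwh, pue=1.0):
    """Electricity cost over the period; pue scales for cooling and other overhead."""
    hours = years * 365 * 24
    kwh = option.avg_power_watts / 1000.0 * hours * pue
    return kwh * usd_per_kwh


def tco_usd(option, years, usd_per_kwh, pue=1.0):
    """Purchase price plus energy plus the catch-all yearly costs."""
    return (option.purchase_price_usd
            + energy_cost_usd(option, years, usd_per_kwh, pue)
            + option.yearly_ops_usd * years)
```

Build one `ServerOption` per candidate from your own quotes and measurements, then compare `tco_usd` over the same period. Note that the sketch compares one server to one server; if the options need different server counts to carry the same workload, multiply accordingly, which is exactly where the workload dependence comes back in.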

Then comes the last question: why would you want a microserver, or more importantly, will it work for your needs? The answer here is unquestionably a definite maybe. Why? We covered that part in detail months ago, you can find the lengthy answer here (Note: For Professional subscribers only, it is a 17 page white-ish paper). There is no right answer here, just the inexorable march of small cores up the stack, subsuming more workloads with every increase in performance. Microservers, most notably in ARM ISA guise, are there or thereabouts now, and getting better every month.

AMD convergence plans in little detail

Going back to AMD, their ‘ambidextrous’ strategy is simple: offer what buyers want with the same tools, management stack, boards, and everything else surrounding it. If you want big x86 cores, swap them in for a few smaller ARM cores. If you want lots of small independent cores, swap those in too. The rest of the stack doesn’t change: one set of boards, one set of software, and one management console. This is a killer app for the big datacenter buyers.

Buyers can choose from low or high core counts, high or low single threaded performance, energy efficiency across a range of options, and all at a theoretically lower cost and potentially lower TCO than Intel. In doing so AMD relegates its traditional core product, that would be a CPU core, to another swappable component from a menu of SoC offerings. This is the future of compute and everyone will be doing it before long, AMD is just the first. Just like SemiAccurate said in 2011. S|A

Charlie Demerjian