Today AMD is releasing a new AI focused professional GPU line called Radeon Instinct. This new yellow marked card line will change the game for pricing, openness, and flexibility.

SemiAccurate saw these cards last week at AMD’s Sonoma briefings and we were impressed. A lot of the details were already out in the wild, some are not going to be revealed until the Vega release, but some are new and interesting. When you piece them all together, AMD has a pretty impressive offering. Add in Zen, at least in Naples form, and you have one pretty powerful platform to hang GPUs off of.

MI25 above and MI6 below

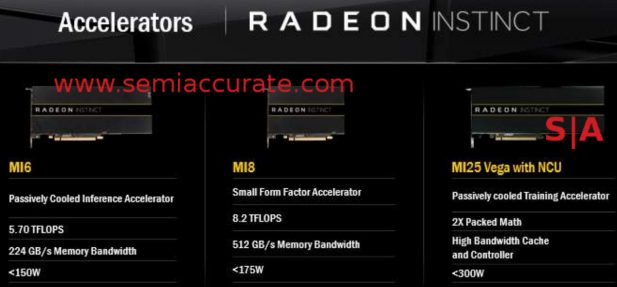

There are three cards in the initial Instinct line: the Polaris based MI6, the Fiji based MI8, and the Vega based MI25. The MI stands for Machine Intelligence and the number is the 16-bit TF rating: 5.7TF for the MI6, 8.2TF for the MI8, and 25ish but officially unspecified for the MI25. For memory, the MI6 has 16GB, the MI8 has 4GB, and the MI25 is again unspecified but likely to be 32GB, or at least that is a SemiAccurate guess. The architectures obviously cap the memory sizes, but the Fiji based MI8 will have very fast HBM, so if your algorithms are latency bound you know what you should pick: yes, wait for the Vega based MI25. Bet you thought I was going to say MI8. :P Here are the specs.

Slightly saner counting than MS with Windows
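The naming rule described above can be sketched in a few lines. This is only an illustration that assumes the number in each name is the 16-bit TF rating rounded to the nearest integer; `instinct_name` is a hypothetical helper, not anything AMD ships, and the MI25 rating is left unset because it is officially unspecified.

```python
# 16-bit TF ratings as quoted in the article. The MI25 figure is officially
# unspecified, so it is stored as None rather than guessed.
FP16_TF = {"MI6": 5.7, "MI8": 8.2, "MI25": None}


def instinct_name(fp16_tf: float) -> str:
    """Hypothetical helper: 'MI' plus the 16-bit TF rating, rounded (an assumption)."""
    return f"MI{round(fp16_tf)}"


for card, tf in FP16_TF.items():
    if tf is None:
        print(f"{card}: rating officially unspecified")
    else:
        print(f"{card}: {tf}TF -> {instinct_name(tf)}")
```

Under that rounding assumption, the two published ratings map back to the names AMD chose.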

There are two things to point out in particular that are possibly still embargoed, but the deck the above slide was taken from specifically says it isn’t embargoed, so off we go. Notice the slide above which says High Bandwidth Cache and Controller? That is the new name for HBM and a lot more that we can’t talk about because it isn’t on the slide. Keep this one in mind; it will be important later when we can tell you why, just not today.

Two slides later there is another bullet point with MxGPU SRIOV HW Virtualization, a real key killer app in this space. Some other unnamed GPU vendors charge a large adder for SRIOV even though their version is nowhere near AMD’s capabilities, and you can’t get it in the same card as the compute variants. With Instinct you can have your cake and eat it too, all at a discount price. Don’t underestimate the value of this to the big guys, this will save them more than enough money to justify the purchase of AMD kit over Nvidia, just ask Alibaba.

Just below that is the line Large BAR Support for MGPU Peer to Peer. BAR is Base Address Register but we can’t tell you why it is important for the same reasons as the HBC above. It is really important but why would you need a big address space? Partially because of the P2P functions listed above and unquestionably because of this. Killer app, trust us on this one, our earlier speculation was dead on.

The thing that differentiates an Instinct card from the rest of the AMD Pro, WX, and S lines, besides the price and spiffy yellow color scheme, is the software. We already told you about ROCm a few weeks ago, and that is the beginning. For Instinct there is another stack called MIOpen. You can figure out what it means, but like ROCm, Open really means open. Since it is open source, you can compile the MIOpen stack and run it on any applicable AMD GPU; they won’t stop you. The target market for Instinct is not going to do this, they care more about the service and support the new line brings. If you want to play around with bleeding edge AI though, any Pro card should be amenable with a little sweat equity.

What is MIOpen? Basically tuned libraries for AI, for which AMD claims an almost 3x advantage over running standard GEMM-based convolution code. AMD claims an MI8 running MIOpen will trounce a Maxwell generation TitanX and beat the Pascal variant by a bit, while the MI25 will almost double Maxwell’s numbers on DeepBench GEMM. Can you say memory latency or bandwidth bound benchmark, boys and girls? The real test will be when Nvidia fixes their memory whoopsie and gets GP100 based cards on the market; then things should be more comparable. Until then AMD wins, but both Vega/MI25 and GP100 should be released for real around the spring. Given AMD’s rate of progress, MIOpen is going to be a pretty interesting middleware stack by that time.
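For readers who have not met the term, here is a rough sketch of what "GEMM-based convolution" means as a baseline. It is a generic textbook illustration in plain Python, not MIOpen code or AMD's method: every image patch is unrolled into a row (often called im2col), and the whole convolution then becomes one matrix multiply. The function names are made up for this example, and like most deep learning code it computes cross-correlation with stride 1 and no padding.

```python
def im2col(image, kh, kw):
    """Unroll every kh x kw patch of a 2D image into one row."""
    h, w = len(image), len(image[0])
    return [
        [image[i + di][j + dj] for di in range(kh) for dj in range(kw)]
        for i in range(h - kh + 1)
        for j in range(w - kw + 1)
    ]


def matmul(a, b):
    """Plain GEMM: (m x k) times (k x n), no tuning whatsoever."""
    return [
        [sum(row[t] * b[t][c] for t in range(len(b))) for c in range(len(b[0]))]
        for row in a
    ]


def conv2d_gemm(image, kernels):
    """Apply several same-sized kernels to one image with a single GEMM."""
    kh, kw = len(kernels[0]), len(kernels[0][0])
    patches = im2col(image, kh, kw)  # (out_h * out_w) x (kh * kw)
    # One column per flattened kernel: (kh * kw) x n_kernels
    flat = [[k[di][dj] for k in kernels] for di in range(kh) for dj in range(kw)]
    return matmul(patches, flat)  # (out_h * out_w) x n_kernels


image = [[1, 2, 3],
         [4, 5, 6],
         [7, 8, 9]]
kernels = [[[1, 0], [0, 1]],   # sums the patch diagonal
           [[1, 1], [1, 1]]]   # sums the whole patch
print(conv2d_gemm(image, kernels))
# prints [[6, 12], [8, 16], [12, 24], [14, 28]]
```

The unrolled patch matrix repeats most input values several times, which is one reason this approach leans hard on memory and why a tuned library has room to do better.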

And now for a SemiAccurate tip of the hat to AMD’s marketing trolls or whoever came up with the MIxx naming scheme. It is going to cause a lot of trouble and misinformation over the short term because of questionable speculation. Lack of technical knowledge can be a dangerous thing, and lack some people will. What are we talking about? The performance behind the implied, and in this case quite real, 25TF in the MI25 name.

Note: The following is for professional and student level subscribers.

Disclosures: Charlie Demerjian and Stone Arch Networking Services, Inc. have no consulting relationships, investment relationships, or hold any investment positions with any of the companies mentioned in this report.

Charlie Demerjian
