Today Qualcomm is unveiling the Snapdragon 835 in a little more detail than last time. SemiAccurate brought you the name a few weeks ago, now it is time to talk about the rest, or at least more of the rest.

In mid-November Qualcomm outed the name Snapdragon 835 and the process it is built on, Samsung’s ’10nm’. It all sounded good until we got to the cores, four big “Semi-Custom: Built on ARM Cortex Technology” cores and four little ones with the same caveat, all called Kryo 280. The problem here isn’t with the quality or lineage of the chips, it is with the conspiracy theories that surrounded them, so that is where we will start.

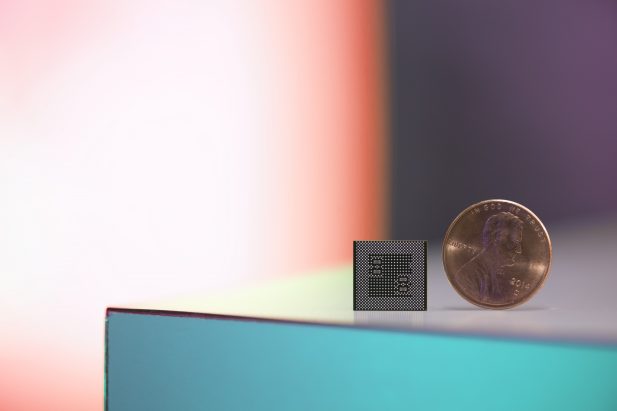

One is worth much more than the other

The cores themselves are heavily modified ARM Cortex A73s and A53s for the big and little respectively. Actually it would be more apt to call them based on the Artemis architecture. These are done under the “Built on” license where a vendor gives ARM a list of things they want changed and ARM does it to the normally black-box cores they license. If you think of this license as halfway between a normal core IP license and a full custom design, you would be close but it is much more toward the black box side of the offerings.

The reason for this semi-custom design is that Qualcomm’s last custom core, the Hydra in the 820, has a lot of modifications that no stock ARM part has. These mods are mostly to decrease latency and power use as data moves between custom blocks like the ISP, GPU, DSP, and CPU. Think of them as efficient fast paths, but those are only the beginning, the rest falls into details and secret sauce categories. (Note: We probably shouldn’t say tighter memory controller integration, more outstanding memory requests, and several different buses, so we won’t.)

Before you dismiss these ‘little’ things, they are the difference between a good SoC and a crap one, they are the value add and make a huge difference for the user experience. If you didn’t have all these bits, your A/V experience would suffer badly and energy use would go up dramatically. Once these were implemented, starting in Krait and really taking off in Hydra, there was no going back. Remember the wonderful reception the 810 got, it was based on vanilla ARM cores more or less and was widely regarded as a regression in some ways. The Built on Cortex program allows Qualcomm to avoid that pitfall by integrating the differentiating technologies found in Hydra without redoing the core from the ground up.

Now the downside, the conspiracies. Let’s get this out of the way up front, Qualcomm did go from a full custom core to a non-custom A73 variant in the 835. A lot of people are pointing to this as a regression, that the ARM cores are better than Hydra+1, Qualcomm can’t design a core anymore, and a lot of other similar things. The official line is that Qualcomm evaluates its options at every given node and picks the best one for each SoC.

This is quite true but unfortunately plays into the hands of the conspiracy theorists. Their best option was a modded A73 so Hydra suxx0rz and Artemis rulezzz! That isn’t close to the case and SemiAccurate can say with confidence that the decision was made on things not related to core performance, internal design capabilities, or things of a related nature. If Qualcomm wanted to do the 835 with a Hydra variant or successor, it probably would have been better than the result in the 835. If you take this as us saying we know exactly what happened but can’t say why, you would be right on, there was a damn good reason for the decision and it was the right one from our PoV. No we won’t elaborate on that any more, maybe in a few years.

So with the cores and conspiracy out of the way, the take home message is that the Kryo 280 in the Snapdragon 835 is two cores, a big based on A73 and a little based on A53, both heavily modded from their original state. The A73s run at 2.45GHz and have a 2MB L2, the A53s run at 1.9GHz and have a 1MB L2. This combo should be better than the Hydras found in the 820/821 SoCs but not as good as upcoming custom cores which we expect to see in future generations, they aren’t dead by any means.

From there everything else is updated as well, the modem goes to X16/GbLTE, GPU is now called the Adreno 540, DSP is updated to the Hexagon 690, ISP is a Spectra 180, and much more little stuff. All of these plus location, sensors, security, and the minor blocks require low energy, low latency passing of data which is why Qualcomm had to modify the ARM cores, vanilla would have fallen flat juggling all these bits.

Before we get into the pieces, let’s go back to the overarching theme of juggling disparate bits with regards to data flows. Qualcomm calls this task manager Symphony System Manager and in many ways it is what differentiates their SoC from the vanilla Lego block designs of most commodity ARM SoCs. Symphony does this in the background, and it isn’t a specific block, more of an overarching paradigm.

If you don’t understand the importance of such managers, ask yourself the simple question of what happens when you take a movie. The camera pushes the data to the ISP, then the DSP for cleanup, then onto the CPU for tagging. You could add effects on the GPU, and all of this needs to happen when the display is on. Simple enough and everything is done on the highest performance, lowest power use blocks.

Now let’s add in some more complex tasks like AR. The camera is on sending to the ISP, sensors are sending data to influence the viewport, the DSP is using its power to recognize faces, the radio is sending data to and from the cloud to add ‘intelligence’ to the scene, and so on. If the DSP is recognizing faces, it can’t clean up the image so that job could be done, albeit less efficiently, by the GPU. But that is rendering the overlays for AR. And what about the display itself, GPU, CPU, or…? This all becomes a mess very quickly.
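To make the mess concrete, here is a minimal sketch of the problem, not of Symphony itself. The task names, the preference lists, and the greedy first-free-unit policy are all our own assumptions for illustration; Qualcomm has not published how Symphony actually decides.

```python
# Hypothetical preference lists, most efficient unit first. These are
# illustrative assumptions, not Qualcomm's actual scheduling policy.
PREFERENCES = {
    "face_recognition": ["DSP", "GPU", "CPU"],
    "image_cleanup": ["DSP", "GPU", "CPU"],
    "ar_overlay": ["GPU", "CPU"],
}


def assign(tasks, preferences=PREFERENCES):
    """Greedily give each task the first unit on its list that is still free."""
    busy = set()
    placement = {}
    for task in tasks:
        for unit in preferences[task]:
            if unit not in busy:
                busy.add(unit)
                placement[task] = unit
                break
        else:
            placement[task] = None  # nothing free: the task has to wait
    return placement


if __name__ == "__main__":
    # Same three jobs, two arrival orders, two different outcomes.
    print(assign(["face_recognition", "image_cleanup", "ar_overlay"]))
    print(assign(["face_recognition", "ar_overlay", "image_cleanup"]))
```

With the first ordering the cleanup job grabs the GPU and the AR overlay gets pushed to the CPU, with the second the overlay keeps the GPU and cleanup lands on the CPU. Either way something ends up on a less efficient block, and a naive policy lets arrival order decide which one, which is exactly the kind of choice a system-level manager has to make deliberately.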

The above scenario is not contrived, check out how fast Pokemon Go sucks your battery dry in AR mode if you don’t believe me, this is all happening now. It could all be done on a CPU too, but not in realtime and with AR, slight hiccups translate into an awful, sometimes literally sickening experience. Usually a programmer will handle the details, but what if two tasks are running at the same time? What juggles them? Does it do things in a power efficient manner, prioritize user experience, make sure realtime processes don’t drop critical data, or a mixture of the above?

Symphony does a lot of this juggling, programs do a bit as well, and if you use a modern phone, you never see any of this. Without the fastpaths between the units and the low energy direct data transfers, it may still work but your battery life would be a bit questionable. If you don’t have an uncore with a very good firmware/software manager, it is not a device that gives a consistently acceptable user experience. Please do note that I rarely if at all mentioned performance of the units in the above rant, just integration and communication between them. Developers have control over this prioritization in Symphony and there are tools to help them with pointing the juggling in the right direction on the 835.

Moving back to the units themselves, all are supposedly beefed up and the Adreno 540 GPU is near the top of the list. Since Qualcomm never goes into details about the unit itself it is hard to do much more than say it is better than the 820/821’s version. We can say the 540 has 10-bit data paths and supports 10-bit color as standard, as do the DPU and VPU tied to it. Officially Vulkan, OpenGL ES, and DX12 are supported and the unit can do 4K HEVC at 10-bit depths.

One nice touch is something Qualcomm calls Q-Sync which will be familiar to PC gamers. It syncs the display of the device, which is nearly always variable frame rate in modern high-end phones, to the GPU output. If the frame rates are matched there is theoretically no tearing along with a smoother display. You can still have problems if the GPU releases frames at really inconsistent intervals but that is a software/game engine problem.
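A rough way to see why matching the panel to the GPU helps is to compare how long a finished frame waits before it is shown. The sketch below is a toy model under our own assumptions (a 60Hz fixed panel with vsync, and a variable panel that can refresh at most every 1/120s); it is not based on any published Q-Sync internals.

```python
import math


def fixed_refresh_delays(frame_ready_ms, refresh_hz=60.0):
    """Wait (ms) for each frame when it must hold until the next fixed refresh tick."""
    period = 1000.0 / refresh_hz
    return [math.ceil(t / period) * period - t for t in frame_ready_ms]


def adaptive_sync_delays(frame_ready_ms, min_interval_ms=1000.0 / 120.0):
    """Wait (ms) for each frame when the panel refreshes as soon as a frame is
    ready, limited only by an assumed minimum interval between refreshes."""
    delays = []
    last_shown = -math.inf
    for t in frame_ready_ms:
        shown = max(t, last_shown + min_interval_ms)
        delays.append(shown - t)
        last_shown = shown
    return delays


if __name__ == "__main__":
    # A GPU delivering a steady 40fps: frames finish every 25ms.
    ready = [20.0, 45.0, 70.0]
    print(fixed_refresh_delays(ready))
    print(adaptive_sync_delays(ready))
```

On the fixed panel the steady 40fps stream is shown with waits that bounce between roughly 13ms and 5ms, which reads as judder, and skipping the wait instead is what produces tearing. On the synced panel every frame goes out as soon as it is done. Note that the model does nothing for a GPU that delivers frames at wildly uneven intervals, the panel just faithfully shows the unevenness.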

Moving back to the hard stats, there are two data points we can mention for the Adreno 540. It can process 16 pixels per clock and is 25% faster on trilinear filtering than the older units. All this and more combines to a figure of 25% faster than the 820 and possibly at a lower energy draw too.

This is supplemented by the Hexagon 690 DSP which is the unsung hero of the modern phone in many ways. The big headline for the new iteration is support for scene based audio, but DSD format support and better SNR are welcome too. A bit more generic but likely far more important in the near future is a lowering of the latency for sensor data.

If you are doing VR, sensor to photon latency is the only real metric that matters. Qualcomm has taken a chunk out of this latency on the 690 as well as adding 6-DOF support. To make things easier for the developer, Qualcomm has two tools that tie into these DSP features, an SDK and a reference platform. The Qualcomm VR SDK is just what it sounds like and the Snapdragon VR 835 reference platform is a full VR rig that devs can modify and molest to their heart’s content. Expect this one to do what the past camera and watch reference platforms did for their respective markets, i.e. lead to an explosion of product over the next few months. Qualcomm claims 20+ designs are in the pipe already and we expect a few to debut at CES 2017.

That brings us to the Spectra 180 ISP. Headline numbers are dual 14-bit ISPs which can support a single 32MP camera or two 16MP cameras. Headline numbers aren’t the only thing added, new features are more important than the higher res, starting off with better image stabilization (EIS3.0), Hybrid Auto Focus, 2PD autofocus support, and optical zoom.

The dual camera support is the most interesting because there are now several tricks that can be done with it. If you put in a color camera module and a second one with the color filters removed and computationally combine the image, you get a huge reduction in noise, vastly superior low light performance, and a lot of other benefits. It takes a lot of horsepower to do the compute but if you recall the DSP we talked about earlier…
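For a feel of where the noise reduction comes from, here is a minimal sketch using plain inverse-variance weighting of two brightness readings for the same pixel. This is a textbook technique we are swapping in for illustration, real dual-camera pipelines also have to align the two images and handle color, and the noise figures below are made-up assumptions.

```python
def fuse_luma(color_luma, mono_luma, color_var, mono_var):
    """Combine two noisy brightness readings of the same pixel.

    Each reading is weighted by the inverse of its noise variance, so the
    cleaner (here: filterless monochrome) sensor counts for more.
    Returns (fused value, variance of the fused value).
    """
    w_color = 1.0 / color_var
    w_mono = 1.0 / mono_var
    fused = (w_color * color_luma + w_mono * mono_luma) / (w_color + w_mono)
    fused_var = 1.0 / (w_color + w_mono)
    return fused, fused_var


if __name__ == "__main__":
    # Assumed numbers: the mono sensor is 4x less noisy than the color one.
    value, variance = fuse_luma(100.0, 104.0, color_var=4.0, mono_var=1.0)
    print(value, variance)
```

The fused variance always comes out lower than that of either input on its own, which is the whole point, and doing this per pixel on full resolution frames in realtime is where the compute bill comes from.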

Alternatively you could put a zoom lens on one module and keep the other one standard, then computationally combine the two to make a form of optical zoom rather than purely faking it as modern phones are wont to do. You could also combine the results to do computational stabilization better, and much more. This is how EIS3.0 can work at up to 4K resolutions, or at least part of how it can work.

Another part of that much more comes in with the hybrid autofocus. The Snapdragon 835 supports 2PD or dual phase detect AF which is coming on the new wave of 1.4 micron sensors. It splits every pixel into two parts and does phase detect on each half. The net result is much better than the traditional way of sprinkling 2PD sensors around the die to save area. In high light conditions, the 835 will use standard contrast based AF. At low light conditions, laser or IR AF is used, and somewhere in the middle 2PD is the method of choice. This all assumes the phone maker didn’t cheap out on hardware, but anything the 835 goes into will probably not fall into that category.
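The selection logic described above boils down to picking a method by light level. A minimal sketch follows, with the caveat that the lux thresholds and the function name are purely our assumptions, Qualcomm has not said where the crossover points are or how the decision is really made.

```python
def choose_af_method(lux, low_light_lux=10.0, bright_lux=1000.0):
    """Pick an autofocus method from scene brightness.

    The thresholds are hypothetical placeholders, not Qualcomm's values.
    """
    if lux >= bright_lux:
        return "contrast"      # plenty of light: standard contrast based AF
    if lux <= low_light_lux:
        return "laser_or_ir"   # too dark for the sensor alone: active AF
    return "2pd"               # the middle ground: dual phase detect


if __name__ == "__main__":
    for lux in (5.0, 300.0, 20000.0):
        print(lux, choose_af_method(lux))
```

A real implementation would likely blend methods and add hysteresis so the camera doesn’t flip back and forth at a threshold, and it would fall back gracefully when a phone lacks the laser or 2PD hardware.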

There are a lot more image related features that result from the added capabilities, combined use of units, and raw hardware power. Going into what units are responsible for what part of the jobs gets a little pedantic, but it is easy enough to understand that without the fastpaths above it would be hard to do any of these jobs in realtime. If you buy your device from a vendor that puts the time and effort into features and value added options, it is likely that these official functions are only the beginning of the list.

On the security front, the cameras connected to the 835 are able to use the secure path in the SoC so images can be ‘fully secure’. This may not seem very important to most users until you think about things like authentication and biometrics. Most vendors won’t consider a device secure without ARM’s TrustZone at a minimum, and if a camera isn’t trusted it can’t be used for inputs to secure software. Secure camera foundation means retina and iris biometrics are essentially available as options on Snapdragon 835 devices. Unfortunately it won’t protect stupid people from doing stupid things with a camera, we haven’t progressed that far yet.

In a nutshell, that is the new Snapdragon 835. It starts out with ARM cores and modifies them to support the things that the older Hydra cores made necessary for modern mobile experiences. The 10nm SoC is more efficient as are the Kryo 280 cores, the Adreno 540 GPU, Spectra 180 ISP, and the Hexagon 690 DSP. The real value is in the uncore coördination and integration though, a lot of companies can slap similar units down and call it an SoC. Only those that sweat the details, integrate the components carefully, then add a lot of software and firmware end up with something that functions as a complete unit. From the looks of it, Qualcomm did just that with the Snapdragon 835. S|A

Charlie Demerjian
