AMD just took their commanding lead in the workstation market and expanded it with the new Threadripper Pro. SemiAccurate exclusively told you about this part last November, but the details are what make it interesting.

We got one thing wrong: the 8-channel Threadripper 3 Pro didn’t launch at CES as we predicted, but it is everything we said it was. If you are looking for a cross between the best features of Threadripper and the best features of Epyc, the WRX80 is the platform for you. Let’s start with a look at what this new platform brings to the table.

AMD vs AMD vs Intel

The ‘old’ Threadripper 3 (TR3) beat the best Intel had in every way except memory channels, maximum memory capacity, and manageability. Everywhere else TR3 comprehensively trounced Intel, and the real-world performance gap was much greater than the numbers suggested. Those missing features could be had with a single-socket Epyc CPU, but that was officially frowned upon in the same way that Intel looks askance if you put a Xeon without the -W suffix in a workstation.

PCIe4 made AMD’s TR3 a winner if you needed high-speed storage or networking, and multiple video cards also work better on Threadripper. Other than a few corner cases, the workstation market wasn’t a fair fight; it really wasn’t even a fight. WRX80 doubles the memory capacity of the best Xeon, adds 33% more memory channels (eight versus six) running at higher speeds than Intel’s, and turns on manageability and memory encryption too. With the WRX80 platform release, there is nothing Intel does better in the workstation market.

The SKUs in question

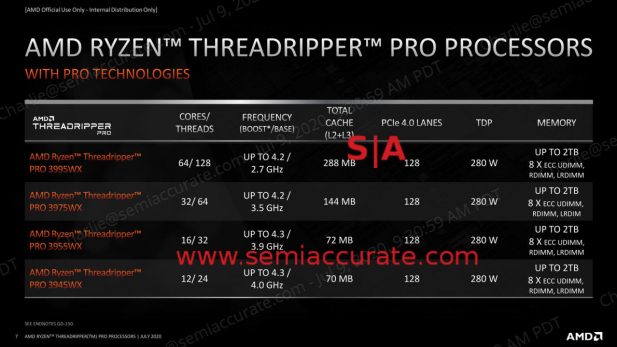

In contrast to Intel’s blizzard of pointless SKUs on three incompatible platforms, AMD has four offerings, all with the complete feature set. Intel’s solution quagmire is a minefield of fused-off features, making it impossible to get anything close to what you need at a price with the same number of digits as AMD’s offerings. That said, these new Threadripper 3 Pro CPUs are OEM-only, so there is no MSRP, but even if AMD doubles the price versus the vanilla TR3, a TR3 Pro will still cost less than half as much as the two Xeon Platinum 8280s it beats in most benchmarks.

What is interesting is which SKUs AMD picked and how many dies each one has. The 64C 3995WX is a given, as is the 32C 3975WX, but the lack of a 48C offering is a bit unusual. That said, there is a hole in the numbering scheme that leaves room for that core count; if you want to buy 10K or 20K units, I am sure AMD will happily sell them to you.

The lack of a 24C part is also a bit unusual, but the 16C 3955WX is right where you would have predicted a part would go, and again there is a numerical hole for a future 24C part. The most interesting is the high-clocked 12C 3945WX, which has a pretty staggering base clock of 4GHz. If you want consistent single-threaded performance, this is the SKU for you.

Let’s take a look at what AMD is targeting with each of these four parts. The 32C part is the mainstream choice, a good balance between memory bandwidth, core counts, clock speeds, and I/O. The 64C 3995WX is a bit memory-bound for mainstream workloads, but if your application is more compute-focused (3D rendering comes to mind), this part will shine. For average use or some types of HPC workloads, it won’t help nearly as much as you would expect from 2x the cores.
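The memory-bound point about the 64C part comes down to simple division: every SKU shares the same eight channels, so doubling the cores halves the peak bandwidth each core can count on. The sketch below assumes all four SKUs run eight 64-bit channels at DDR4-3200 and computes theoretical peaks only; sustained real-world bandwidth is lower. The names `peak_bandwidth_gbs` and `bandwidth_per_core` are illustrative, not from AMD.

```python
# Theoretical peak DRAM bandwidth per core for the four Threadripper 3 Pro SKUs.
# Assumptions (illustrative): all eight channels populated, DDR4-3200,
# 64-bit (8-byte) channels. Sustained bandwidth in practice is lower.

CHANNELS = 8
TRANSFERS_PER_SEC = 3200e6   # DDR4-3200 = 3200 mega-transfers per second
BYTES_PER_TRANSFER = 8       # one 64-bit channel moves 8 bytes per transfer

SKU_CORES = {
    "3995WX": 64,
    "3975WX": 32,
    "3955WX": 16,
    "3945WX": 12,
}


def peak_bandwidth_gbs(channels: int = CHANNELS) -> float:
    """Peak socket bandwidth in GB/s (10^9 bytes per second)."""
    return channels * TRANSFERS_PER_SEC * BYTES_PER_TRANSFER / 1e9


def bandwidth_per_core(cores: int, channels: int = CHANNELS) -> float:
    """Peak bandwidth divided evenly across all active cores."""
    return peak_bandwidth_gbs(channels) / cores


if __name__ == "__main__":
    print(f"Socket peak: {peak_bandwidth_gbs():.1f} GB/s")
    for sku, cores in SKU_CORES.items():
        print(f"{sku}: {cores}C -> {bandwidth_per_core(cores):.2f} GB/s per core")
```

Under these assumptions the socket tops out at 204.8 GB/s, so the 3995WX gets 3.2 GB/s per core against 6.4 GB/s for the 3975WX. Calling `peak_bandwidth_gbs(4)` gives the 102.4 GB/s ceiling of a four-channel TR3 at the same memory speed for comparison.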

The 16C and 12C parts are the interesting ones, aimed squarely at heavy single-threaded workloads and at applications that are priced per core. The former category is very large and the latter much smaller than most people believe, but both of these TR3Pro offerings hit the sweet spot for their niches. The 12C SKU was added to the Pro line to max out single-threaded apps at a decent price, and its eight memory channels and 128 PCIe4 lanes mean there are no system bottlenecks either.

Most interesting, however, are the die configurations. As you know, AMD’s Rome architecture has nine chiplets in an 8+1 configuration: eight CPU dies (CCDs) and one I/O die (IOH). The 64C part and a hypothetical 48C part would use all eight CCDs, with eight and six active cores each respectively. The 32C version could be four CCDs with 8C each or eight CCDs with 4C each; in this case AMD chose four fully populated CCDs. SemiAccurate would have preferred eight CCDs of 4C for thermal, cache, and I/O reasons, but there could be a good reason to go the route AMD chose. We won’t say margins out loud though, it would be unseemly. We will say cache locality, but that is pretty minor for most workloads.
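The choice AMD faced for the 32C part can be made concrete by enumerating which CCD counts and per-CCD core counts multiply out to a given total. This is a minimal sketch under simplifying assumptions that are ours, not AMD’s published rules: a package carries 2, 4, or 8 CCDs, every CCD has the same number of active cores, and a CCD runs 2, 4, 6, or 8 active cores. The function name `layouts` is hypothetical.

```python
# Enumerate ways to build a core count from identical Rome-style CCDs.
# Simplifying assumptions (not AMD's official rules): 2, 4, or 8 CCDs per
# package, equal active cores on every CCD, and 2/4/6/8 active cores per CCD.

CCD_COUNTS = (2, 4, 8)
CORES_PER_CCD = (2, 4, 6, 8)


def layouts(total_cores: int) -> list[tuple[int, int]]:
    """Return (ccd_count, cores_per_ccd) pairs whose product is total_cores."""
    return [
        (ccds, total_cores // ccds)
        for ccds in CCD_COUNTS
        if total_cores % ccds == 0 and total_cores // ccds in CORES_PER_CCD
    ]


if __name__ == "__main__":
    for cores in (64, 48, 32, 16, 12):
        print(cores, layouts(cores))
```

With these rules 32C yields both (4, 8) and (8, 4), the two options discussed above, while 64C and 48C only fit as eight CCDs.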

The same holds true for the 16C and 12C variants: each has only two CCDs, with 8C and 6C active per CCD respectively. Our hope is that someday AMD will release a 16C TR3 Pro built from eight CCDs, with a higher TDP ceiling and base clocks to match. Why? 4x the I/O between the CCDs and the IOH, better distributed thermals, cores chosen for top speed from a larger pool of candidates, and much more cache, 4x the 3955WX to be exact. We can dream, can’t we?
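The “4x the cache” figure follows from L3 scaling with CCD count rather than active core count. The sketch assumes each Zen 2 CCD keeps its full 32 MB of L3 (two CCXs of 16 MB) regardless of how many cores are enabled; the rows labeled hypothetical are not products. `LAYOUTS` and `l3_mb` are illustrative names.

```python
# Total L3 for shipping and hypothetical Threadripper 3 Pro layouts.
# Assumption: every Zen 2 CCD keeps its full 32 MB L3 (2 CCXs x 16 MB)
# no matter how many of its cores are active.

L3_MB_PER_CCD = 32

# (label, ccd_count, active_cores_per_ccd); "hypothetical" rows are not products.
LAYOUTS = [
    ("3995WX", 8, 8),
    ("3975WX", 4, 8),
    ("3955WX", 2, 8),
    ("3945WX", 2, 6),
    ("hypothetical 32C, 8 CCDs", 8, 4),
    ("hypothetical 16C, 8 CCDs", 8, 2),
]


def l3_mb(ccds: int) -> int:
    """Total L3 in MB for a package with the given number of CCDs."""
    return ccds * L3_MB_PER_CCD


if __name__ == "__main__":
    base = l3_mb(2)  # the two-CCD 3955WX as the reference point
    for label, ccds, per_ccd in LAYOUTS:
        total = l3_mb(ccds)
        print(f"{label}: {ccds * per_ccd}C, {total} MB L3, {total // base}x the 3955WX")
```

Under this assumption the hypothetical eight-CCD 16C part carries 256 MB of L3 against 64 MB for the 3955WX. The same 8:2 ratio applies to CCD-to-IOH links if, as we assume here, each CCD has one link to the I/O die.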

So how well does AMD’s new Threadripper 3 Pro perform? Since AMD once again refuses to do the right thing and disclose their test settings, we think their numbers are worthless and won’t repeat them here. Why AMD is regressing so badly on this front is beyond us, and it is especially concerning given that they bitch publicly whenever Intel misses a single data point in its disclosures. AMD really needs to get with the program here; this has gone on for far too long. All that said, we don’t have any issue with AMD’s claim that they beat Intel’s Xeon at just about everything, and do so handily at a much lower cost.

After this wall of text we come to the interesting part, the details. AMD is officially turning on three things that make TR3 Pro better than TR3. Memory channels and PCIe lanes are the first two, but those are obvious. The third, which we are counting as one item in case you are keeping score, is the group of manageability, encryption, and platform stability, and it is the important one.

Manageability is obviously important: Intel has its vPro management suite, and AMD needs to include its own if it wants to play in this space. That is now done, or at least no longer disabled, since it was intentionally removed from TR3. Similarly, the platform stability enhancements, 18 months of stable drivers and 24 months of availability, mean a lot to the workstation market, especially to those working with software that needs platform certifications. Without stability and manageability, few large OEMs are willing to expend the effort to certify a new CPU, so this is more critical than you might imagine.

The most important bit is the memory encryption, called AMD Pro Security. SemiAccurate went into detail about the last version in an earlier article, and Rome’s suite adds features but not large categories of functionality. While it does take a minor 1-2% performance hit, AMD can fully encrypt memory on the fly, making many types of attacks pointless, especially in multi-tenant/VM environments. The workstation market tends to take security very seriously, and AMD is the only company that has this capability.

Intel claimed to have something similar with Ice Lake-SP, but if you read the white papers, Intel’s solution is not even in the same ballpark as AMD’s last generation. And Ice Lake-SP still isn’t out, while Rome has been out for a year. When Intel’s Sapphire Rapids comes out in 2022, it may catch up to AMD’s Naples on this front, but AMD will have two more generations of progress on the market by then. SemiAccurate doubts Intel can catch up on security in the next three years, and it is a critical selling point for many buyers.

More important than base memory encryption is AMD’s encryption of VMs, something that is less important to the workstation markets TR3Pro targets but still useful for many buyers. AMD can transparently spawn and encrypt VMs without the VMM being able to see the key. Basically, your hosting provider cannot see your data, so if you use a TR3Pro or Epyc in a hosted fashion, your data is safe. This is critical, and Intel simply cannot offer this now or in 2021.

Last but not least, Intel recently put out a very disingenuous white paper meant to use Thunderbolt security as a wedge. They strongly imply that only Intel’s VT-d can secure Thunderbolt’s egregious DMA holes, so don’t buy anything else. We asked, and AMD will do the exact same thing with AMD-Vi, likely better due to the memory encryption barriers it can deploy. Don’t believe the hype.

Moving away from security and back to the wish-list feature set, we come to an item that is enabled on Threadripper 3 Pro: non-ECC memory support. It may seem odd to call the lack of ECC a feature, but as far as we are aware you can’t turn ECC off on Epyc, whereas you don’t have to use ECC memory on TR3Pro. Why is this important? Imagine a high-clocked 12C gaming rig with 8-channel DDR4-3200, 128 PCIe lanes, and a 280W TDP out of the box. That could be a lot of fun, especially if OEMs enable overclocking features on their workstations; hopefully at least one will.

So in the end, what do we have? Threadripper 3 Pro adds corporate- and management-friendly features to an already dominant workstation platform. The added memory channels and PCIe4 lanes are just icing on the cake; the security and remote management are what will really sell chips. Given the state of Intel’s server program, it is unlikely that there will be real competition for Threadripper 3 Pro for the next 18-24 months. AMD won last fall with Threadripper 3, and Pro is just more of a good thing. S|A

Charlie Demerjian
