Yesterday Multicoreware announced x265, the H.265 encoding successor to the x264 H.264 encoder. To go along with it there is a decoder called UHDecode but that one is far less interesting.

If you are not familiar with Multicoreware, they make software stuff for parallel and heterogeneous compute environments. By stuff I mean codecs, tools, cross-platform thingies, and other related widgets that make some very complex problems much easier. It is the kind of company where you either need much more of what they make or have never heard of them.

Let’s step back in time a bit and talk about what H.265, HEVC, and several related bits are so you understand why it is so complex. H.261 was a video conferencing codec way back in the dark ages, and its design fed into MPEG-1, the format behind Video CDs. MPEG-2 video is also known as H.262, and H.263 is closely related to MPEG-4 part 2. I’ll bet you were going to say MPEG-3, right? Next up was MPEG-4 part 10 aka H.264, and yes the people naming this should never have children for good reason. Now we are at H.265 aka HEVC, which is formally MPEG-H part 2 just to keep the tradition alive.

There are two main goals for H.265: to make the encoded stream half the bandwidth of H.264 at the same quality, and to put the compute burden on the encode side, not the decode side. As you probably understand, there is a lot more decoding done than encoding. How many times is a movie mastered vs played back? This shift makes a lot of sense for net based video and mobile device playback, both of which are unquestionably the future.

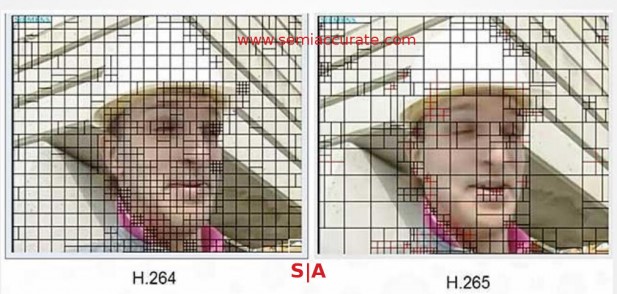

How H.265 does these things is a multipart process. The main way is to update the encoding process to be much more efficient. This falls under two main categories, larger macroblock sizes and more precision within said blocks. Multicoreware has an excellent slide deck with some of the process explained, and the following pictures are from their presentation.

Blocks and more blocks but bigger blocks too

Although it may be counter-intuitive, making larger macroblocks can be more efficient. If you have a large patch of very consistent color in your image, say a logo or the side of a car, the smaller the biggest block is, the more information has to be sent. Saying an 8×8 block is color XYZ at position A takes ~4x less data than having to say there are four 4×4 blocks of color XYZ at positions A-D. This is an oversimplification but you get the idea: things that don’t need high detail can be optimized much better with larger block sizes, even if they bring other types of overhead.
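To put rough numbers on the flat-patch argument, here is a minimal sketch. It assumes a toy cost model in which every block costs exactly one "position plus color" record, which is not how a real encoder accounts for bits, and the function name `blocks_needed` is made up for illustration.

```python
def blocks_needed(width, height, block_size):
    """Count the blocks of one fixed size needed to tile a width x height area."""
    across = -(-width // block_size)   # ceiling division
    down = -(-height // block_size)
    return across * down

# Toy cost model (an assumption): one record per block, regardless of size.
flat_patch = (64, 64)  # a 64x64 area of one solid color
for size in (4, 8, 16, 64):
    count = blocks_needed(*flat_patch, size)
    print(f"{size:>2}x{size:<2} blocks: {count} records")
```

Under this model an 8×8 area costs one record as a single block and four records as 4×4 blocks, which is the ~4x figure above. The gap only grows as the largest allowed block gets bigger.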

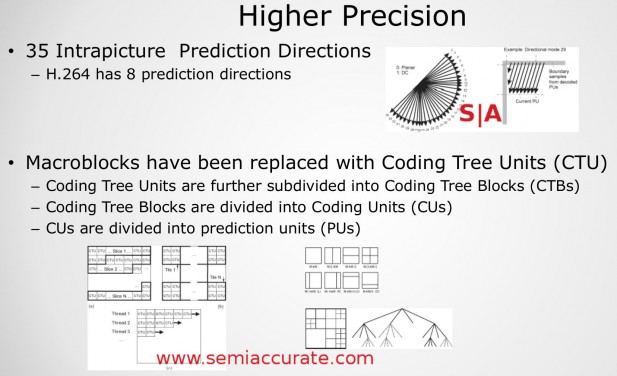

H.265 prediction directions and coding trees

Some of this complexity is pictured above, starting with the prediction directions. You might recall H.264 had eight directions to predict a block from, something that plays well with fixed block sizes or multiples of a fixed size. H.265 does away with many of these block restrictions and completely rethinks the prediction directions. There are now 33 directional modes, 35 in total once the planar and DC modes are counted, and if you look at how they are oriented, you can also get a good idea about how software is meant to parse an image, top left to bottom right. Having roughly 4x the prediction directions means far more than 4x the compute is needed to encode, but the resultant output is a far more efficient stream.
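To see why more prediction modes cost more compute, consider a toy mode search. This sketch is not the HEVC algorithm: it assumes only three simplified modes (DC, vertical, horizontal), scores them with a plain sum of absolute differences, and every function name here is hypothetical. The point is that each extra mode is one more candidate block to build and score, for every block in the frame.

```python
def predict(mode, top, left, size):
    """Build a size x size predicted block from the neighbouring pixels."""
    if mode == "vertical":      # copy the row above straight down
        return [list(top) for _ in range(size)]
    if mode == "horizontal":    # copy the left column straight across
        return [[left[y]] * size for y in range(size)]
    if mode == "dc":            # flat block at the neighbours' average
        dc = round((sum(top) + sum(left)) / (2 * size))
        return [[dc] * size for _ in range(size)]
    raise ValueError(f"unknown mode: {mode}")

def sad(a, b):
    """Sum of absolute differences between two equally sized blocks."""
    return sum(abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))

def best_mode(block, top, left, modes=("dc", "vertical", "horizontal")):
    """Try every mode and keep the one whose prediction is closest."""
    size = len(block)
    costs = {m: sad(block, predict(m, top, left, size)) for m in modes}
    return min(costs, key=costs.get), costs

# A 4x4 block of vertical stripes, with matching pixels in the row above it.
top = [10, 50, 10, 50]
left = [10, 10, 10, 10]
block = [list(top) for _ in range(4)]
mode, costs = best_mode(block, top, left)
print(mode, costs)
```

Here the vertical mode predicts the stripes perfectly, so the encoder would only need to signal the mode rather than the pixels. A real encoder repeats this kind of search across far more modes and block sizes, which is where the compute goes.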

Similarly the macroblocks are not just possibly larger at times, each one is more complex. Now called Coding Trees, each of these is broken down into three more subtypes that can encode a complex scene much more efficiently. Somewhat counterintuitively they can also encode a less complex scene more efficiently too. If you understand quadtrees you will get this part. If you don’t, now you have something to do this weekend, educate yourself on the topic!
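For anyone who wants a head start on that weekend homework, here is a minimal sketch of tree-based splitting. It assumes a simple rule that is not the real encoder's decision process: split a square into four quadrants whenever its pixels are not all identical, down to a hypothetical minimum of 8×8. The names `quadtree_leaves` and `make_image` are invented for this example.

```python
def quadtree_leaves(image, x, y, size, min_size=8):
    """Return the (x, y, size) leaf blocks covering the square at (x, y)."""
    values = {image[y + dy][x + dx] for dy in range(size) for dx in range(size)}
    if len(values) == 1 or size <= min_size:
        return [(x, y, size)]          # uniform, or too small to split further
    half = size // 2
    leaves = []
    for oy in (0, half):
        for ox in (0, half):
            leaves += quadtree_leaves(image, x + ox, y + oy, half, min_size)
    return leaves

def make_image(size, detail_box=None):
    """A flat image, optionally with a checkerboard patch at (x0, y0, w, h)."""
    image = [[0] * size for _ in range(size)]
    if detail_box:
        x0, y0, w, h = detail_box
        for yy in range(y0, y0 + h):
            for xx in range(x0, x0 + w):
                image[yy][xx] = (xx + yy) % 2 + 1
    return image

flat = make_image(64)
busy_corner = make_image(64, detail_box=(0, 0, 8, 8))
print(len(quadtree_leaves(flat, 0, 0, 64)))         # prints 1
print(len(quadtree_leaves(busy_corner, 0, 0, 64)))  # prints 10
```

The flat image stays as a single 64×64 leaf, while the image with one busy corner needs only 10 leaves because the tree splits just where the detail is. A fixed 8×8 grid would spend 64 blocks on either image.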

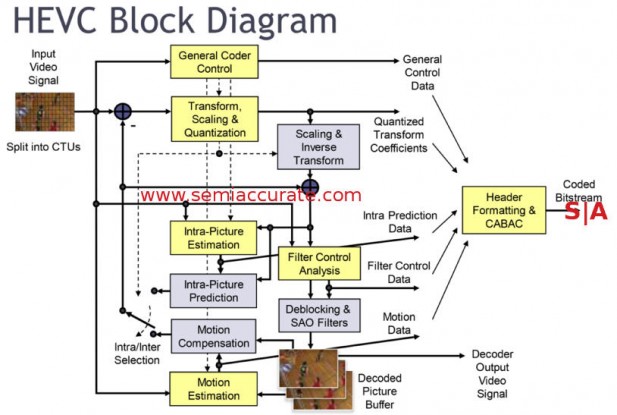

Easy enough, see?

In the end you get something that is horribly complex and time-consuming; even the block diagram of the process is complex. Multicoreware says that HEVC requires about 5-10x the compute power of H.264, which is not light duty in its own right. Also keep in mind that the overhead is for the same resolution, so if you are talking 4K, multiply that by 4. 120Hz, stereo, large color gamuts, 10+bit color, or any of the other upcoming features that UHD may bring will only add to the total, so think massive compute costs to encode.
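The multipliers stack up quickly, as this back-of-the-envelope sketch shows. It assumes encode cost scales linearly with pixel count, takes 1080p as the baseline for the 4x figure, and treats 120Hz as double a 60Hz baseline. Those baselines and the function name are assumptions for illustration, not numbers from Multicoreware.

```python
def relative_encode_cost(hevc_factor, pixel_factor=1.0, frame_rate_factor=1.0, views=1):
    """Encode cost relative to H.264 at the baseline resolution and frame rate."""
    return hevc_factor * pixel_factor * frame_rate_factor * views

# Assumed baseline: 1080p. 4K UHD has exactly four times the pixels.
pixel_factor = (3840 * 2160) / (1920 * 1080)

low = relative_encode_cost(5, pixel_factor)
high = relative_encode_cost(10, pixel_factor)
print(f"4K HEVC vs 1080p H.264: {low:.0f}x to {high:.0f}x")

# Add 120Hz (assumed 2x a 60Hz baseline) and stereo (two views).
worst = relative_encode_cost(10, pixel_factor, frame_rate_factor=2.0, views=2)
print(f"4K, 120Hz, stereo, upper bound: {worst:.0f}x")
```

Even before wider gamuts or higher bit depths enter the picture, this simple model lands at 20-40x for 4K alone.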

Given how much UHD video will be streamed in the coming years, efficiency is of paramount importance to most people paying for bandwidth. That would be just about everyone and Multicoreware says their H.265 encoder implementation called x265 is the most efficient out there. Based on their H.264 encoder performance we have no reason to doubt this claim.

Yesterday’s release was officially about a bundling of their x265 encoder and the UHDecode program as a consumer product. The result is called x265 HEVC Upgrade and only runs on 64-bit Windows systems. The 64-bit requirement is because the encoder actually runs out of memory space on 32-bit systems, it is really that complex of a problem. The Windows side of things is because this version is compiled as a DirectShow filter so the platform is kinda locked down.

Luckily for users, Multicoreware products are easily accessible; in fact you have probably been using them for a while without knowing it. The x265 product is mainly x86 focused and they test it on Linux, Mac, and Windows. The decoder called UHDecode is far less compute intensive as we mentioned above, works on ARM and x86 CPUs, and is supported on Linux, Mac, Windows, Android, PS3, and Xbox 360, essentially everything that can benefit from it. Much of both products is hand-tuned assembly code that uses the latest ISA extensions, including AVX2.

The reason we say you have probably been using their code is that x265 is incorporated into a few obscure video projects here and there. These include VLC, FFmpeg, and HandBrake, in other words the three that matter. In case you are wondering how this works, x265 is dual licensed, GPLv2 and commercial, pick the one you want for your project. If you are selling a product, the choice is just as clear as it is if you are making an open source program. If you are encoding H.265, chances are you are using Multicoreware products.

On the decode front things are not as open. The UHDecode line is split into pro and consumer but neither is open source. Consumer is 8-bit, pro is 10-bit, but both require a commercial license. Since encoding is the harder and more critical of the two, this licensing choice is a bit of a head-scratcher but we will take it either way. At least you know who to thank for the quality H.265 encoder you are probably using.

So that is the long and short of it. H.265 is enormously complex on the compute front, and just keeping track of it is headache inducing, but Multicoreware has done the heavy lifting already. If you want to encode something in H.265 with their stuff, the market leading and obvious choices to do so are all free to use, so go give it a shot. If you want to decode, chances are your devices will do it already, and if not you can buy a copy of UHDecode from Multicoreware. If the system requirements make your eyes water, at least you now know why: there is a lot of work being done under the hood.

Charlie Demerjian