Intel is giving us a whole host of new server goodies, from GPUs to software stacks. Normally SemiAccurate focuses on the hardware, but today the software is of higher import.

A few weeks ago Intel launched their Iris Xe GPUs with the Tiger Lake CPUs and have since added several discrete options and different consumer flavors to the lineup. Today they move that same silicon to the server side with H3C’s XG310 quad-GPU card. We’ll wait while you make “ooooh” sounds looking at the picture below.

The Intel XG310 quad-GPU board

Each XG310 has four of the discrete Iris Xe Max GPUs with 8GB of LPDDR4-2133 memory per chip, so 32GB total for the card. Each Server GPU, yes the product name is “Server GPU”, pulls 23W max and runs at 900MHz base and 1.1GHz max boost. In the server world you normally clock things down a bit for reliability and longevity, and since this GPU has a 5 year duty cycle, Intel did just that. A bit more interesting is the fact that the card appears to the system as four discrete GPUs, something that would be sub-optimal in the consumer space. In the server space, where the prime workload is streaming games and video, that is irrelevant because those applications are almost trivially parallelizable.

The Intel XG310 quad-GPU card

As you can see from the card, it is a server part so there are no fans; airflow is provided by the chassis fans. Intel suggested that most customers are likely to buy these as part of a system with the whole package pre-validated by the OEM, in this case H3C. The XG310 has a 150W TDP, but the 8-pin connector adds 150W to the 75W from the slot, so 225W is the cap. Given the cooling on the card and the conservative nature of the market, we don’t think it will pull anything near that number, but if Tencent decides to OC their datacenter, it appears the XG310 has headroom. Don’t wait up for this eventuality.
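For those who like to check the math, here is a minimal sketch of the card's memory and power budget. It uses only the figures quoted above; the helper name `card_budget` and the dictionary keys are made up for illustration, and the "left over" number is plain subtraction, not a breakdown Intel provided.

```python
# Back-of-the-envelope budget for one XG310, using only figures quoted in the article.
GPUS_PER_CARD = 4     # four Server GPUs per card
MEM_PER_GPU_GB = 8    # LPDDR4 per GPU
GPU_MAX_W = 23        # max power per GPU
CARD_TDP_W = 150      # rated card TDP
SLOT_W = 75           # power available from the PCIe slot
EIGHT_PIN_W = 150     # power available from the 8-pin connector


def card_budget() -> dict:
    """Return memory and power totals for one card (hypothetical helper)."""
    gpu_power_w = GPUS_PER_CARD * GPU_MAX_W
    delivery_cap_w = SLOT_W + EIGHT_PIN_W
    return {
        "memory_gb": GPUS_PER_CARD * MEM_PER_GPU_GB,
        "gpu_power_w": gpu_power_w,
        "delivery_cap_w": delivery_cap_w,
        # How far the connectors could go past the rated TDP.
        "headroom_over_tdp_w": delivery_cap_w - CARD_TDP_W,
        # TDP not accounted for by the four GPUs at max (simple subtraction).
        "tdp_minus_gpus_w": CARD_TDP_W - gpu_power_w,
    }


if __name__ == "__main__":
    for name, value in card_budget().items():
        print(f"{name}: {value}")
```

The four GPUs at their 23W max add up to 92W, comfortably under the 150W TDP, and the connectors could deliver 75W more than the TDP asks for.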

Remember OneAPI? In the days of the ‘before time’, specifically Supercomputing 2019, Intel launched a new software framework to pull all their disparate efforts together and support all of their hardware offerings with one codebase. This is a monumental undertaking and in just over a year, December to be specific, Intel will launch the Gold version, OneAPI 1.0. That is an impressive effort for a single year even if they continue to conveniently ‘forget’ to support the iAPX 432.

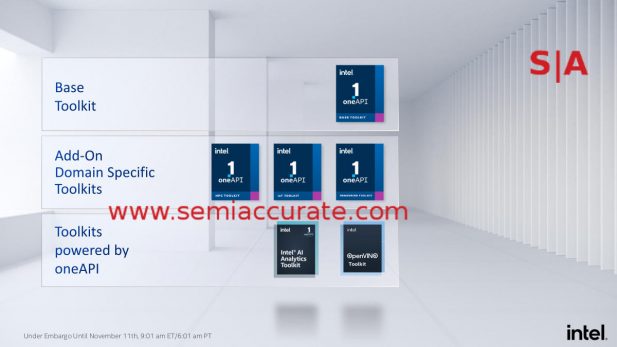

OneAPI has a lot of pieces

A big change to OneAPI is that while it is open source, the Gold release will have commercial support options available as well for those that want it. The base package is supplemented by three domain-specific toolkits, HPC, IoT, and Rendering, plus two toolkits with a ‘powered by OneAPI’ tag, AI Analytics and OpenVINO. The commercial support is important because the base OneAPI + HPC Toolkit effectively replaces Parallel Studio XE and OneAPI + IoT Toolkit replaces System Studio, both of which have commercial support now.

If you want to play with any of this but don’t have the hardware you want to target handy, you can do it on Intel’s DevCloud. Xeons, FPGAs, and Iris Xe Max GPUs are available, and should you sign a phone book of NDAs, Xe-HP GPUs are lurking there too. It is a wonderland for those that like to code for Intel products, plus the price, free, is pretty attractive as well. If you are as skilled a coder as the author, within hours you can have “Hello world” spitting out compiler errors left and right; people with coding skills will have a much easier time though. Seriously though, this was a big effort and getting to Gold in just over a year is quite impressive.

Going back to GPUs, specifically Intel’s Linux drivers, we have a lot of progress. Before you ask why they are bothering, think about what the cloud runs on; it isn’t Windows. Intel started moving their graphics drivers to a single code base across OSes in 2018 and saw about 10% code reuse. In 2020 that is now up to 60%, so the development effort is really paying dividends, especially if you think about how much money that saves. Linux performance went from about 60% of Windows to 90% on graphics and 100% on compute, with three fully validated distros, up from one. This makes us very happy.

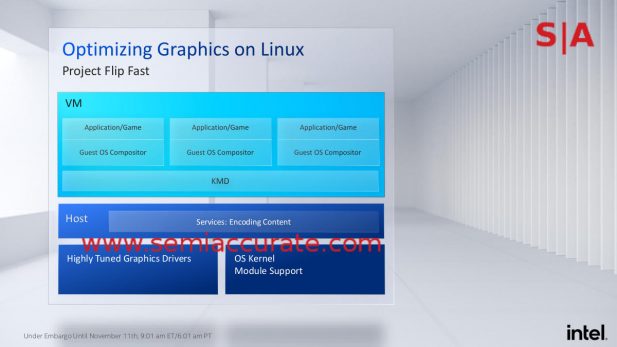

Project Flipfast was unexpected

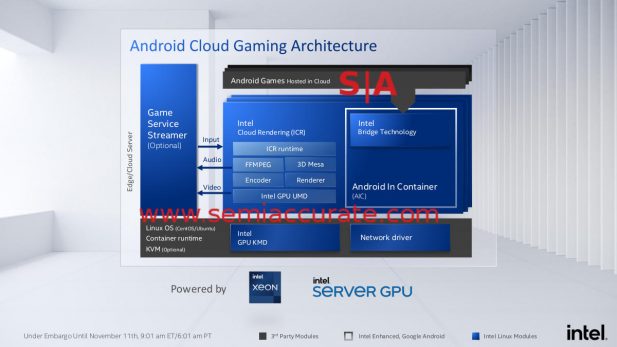

One of the most interesting features revealed today was Project Flipfast. This is a software effort that allows end users to run GPU based applications in a VM at native performance levels. Flipfast supports native VM integration of a GPU and allows zero-copy data transfers between the VMM and VM, something that should be a boon for performance. This is extremely important for cloud hosting of games and video streaming, you know, the thing that the XG310 was literally made for.

The software stack to make it work

Since graphics applications like remote gaming are moving to the cloud with increasing rapidity, taking down the overhead of software pays big TCO benefits. As you know in the server world, TCO is closely related to the ASP a vendor can charge. Less overhead almost directly translates into more money for the hardware vendor so you can see why Linux drivers are getting so much attention at Intel.

And it all results in….

To wrap it all up, you can see above that a single server with two XG310s can support 120 users of Tencent’s two most popular streaming games in 720p-30. Intel provides the hardware and now the software to tie it all together in a single pre-validated unit powered by a single development environment. Lowering overhead means more users per box, which lowers TCO. It is a virtuous cycle that explains why Raja Koduri started out the briefing with the idea that Intel’s strategy is now “Software First”. Makes sense to SemiAccurate. S|A
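As a rough check on that density claim, the sketch below breaks 120 users down per card, per GPU, and per watt of card TDP. It assumes users are spread evenly across all eight GPUs, which the briefing did not state, and it counts only card TDP, not the rest of the server. The helper name `density` is made up for illustration.

```python
# Density arithmetic from the article's figures; the even split is an assumption.
USERS_PER_SERVER = 120   # Tencent streaming users per server
CARDS_PER_SERVER = 2     # two XG310 cards
GPUS_PER_CARD = 4        # four Server GPUs per card
MEM_PER_GPU_GB = 8       # LPDDR4 per GPU
CARD_TDP_W = 150         # rated TDP per card


def density() -> dict:
    """Return per-card, per-GPU, and per-user figures (hypothetical helper)."""
    gpus = CARDS_PER_SERVER * GPUS_PER_CARD
    return {
        "users_per_card": USERS_PER_SERVER / CARDS_PER_SERVER,
        "users_per_gpu": USERS_PER_SERVER / gpus,
        # Card TDP only; CPUs, fans, and the rest of the box are ignored.
        "card_tdp_w_per_user": CARDS_PER_SERVER * CARD_TDP_W / USERS_PER_SERVER,
        "gpu_mem_mb_per_user": gpus * MEM_PER_GPU_GB * 1024 / USERS_PER_SERVER,
    }


if __name__ == "__main__":
    for name, value in density().items():
        print(f"{name}: {value:.1f}")
```

Under those assumptions each GPU carries 15 streams and each user costs about 2.5W of card TDP, which is the sort of number that makes TCO spreadsheets look good.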

Charlie Demerjian
