On Tuesday AMD announced Rome, the 7nm 64-core 9-die monster that SemiAccurate exclusively brought you months ago. Other than a massive performance gain, a bit better than we revealed earlier, the news was expected but impressive.

On Tuesday AMD announced Rome, the 7nm 64-core 9-die monster that SemiAccurate exclusively brought you months ago. Other than a massive performance gain, a bit better than we revealed earlier, the news was expected but impressive.

When SemiAccurate said, “Intel has no chance in servers and they know it“, people scoffed at our numbers. They couldn’t really be _THAT_ good, SemiAccurate is shilling, and lots of other sniping from the trolls was heard. Strangely all those voices are silent now that AMD has revealed the chip and a bit about it’s performance levels. When we said we were understating the performance advantage AMD had in that article, people didn’t believe it. Now they have seen this.

Yes that is really my card

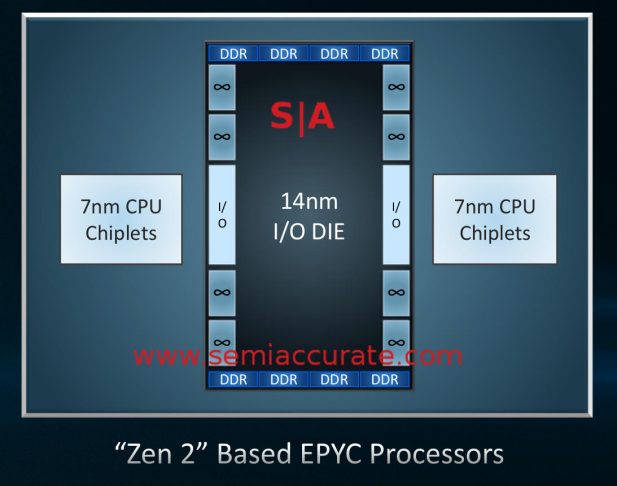

Rome is exactly what we said it was in July, a monster with nine die, eight 8C CCXs on 7nm, and one IOX built on 14nm. While our initial speculation on interconnects was incorrect, we recently got more details and added a few bits that are still not public. If you look at the layout, it is clear AMD did the right things for the right reasons.

Only missing six bits

AMD used 7nm on the CCXs for cores ending up with dies in the 70-75mm range there, about comparable to a modern cell phone. Yields should be pretty spectacular and assuming there are some SKUs with disabled cores like the current Epyc, repairability should be very high indeed.

Just to show they really thought things through, the IOX chip is 14nm. Why? Cost to start out with, 14nm is much cheaper than 7nm and as the IOX name suggests, it is IO heavy. Much of what is on that dies doesn’t shrink very well so in essence AMD took the parts that would benefit least from a shrink and simply didn’t shrink them. This ups yield, decreases cost yet again, and most importantly doesn’t suck up precious 7nm allocation.

AMD should be able to crank out 7nm Rome CCXs at enough volume to satisfy a large portion of the market. The chiplet strategy allows them to mix and match process nodes to the greatest effect and AMD did that masterfully here. Better yet it buys them 33-50% more chips per 7nm wafer. If there is a down side, I am not seeing it.

Rome triumphs

Then there is performance. AMD only demo’s trivial scaling benchmarks, benchmark actually because only C-Ray was shown. That said one single AMD Rome tied a dual socket Naples Epyc CPU for performance. If you read from this that Rome will have 2x the performance of Naples, you are probably a little over excitable. It will be vastly higher but not 2x across a blend of real world workloads. SemiAccurates earlier conservative estimate was 1.7x but it will be higher than that.

Similarly AMD doubled the instruction widths internally for the new Zen2 cores. This is because the AVX resources are doubled but AMD would not say whether it was a doubling of the physical widths or doubling of the unit count. In any case throughout the core what was 128b is not 256b. To aid this, load/store bandwidth was doubled and dispatch/retire bandwidth was increased but not doubled. The net result is a 2x or so FP performance increase per core and a near insane 4x FP increase per socket. In a world of claimed but not real world achievable 10% yearly updates, numbers like 2x and 4x don’t happen often.

On the front end there were a bunch of updates too, mostly because you need to feed the ALUs to get anything meaningful from them. The branch predictor is improved as is pre-fetching, both of which can make or break a core. The Op caches are now larger and the I-caches are ‘re-optimized’. AMD did not go into details on what exactly this means, but you can see the results are pretty good in at least one benchmark.

On the security front things are incrementally better, something we say because AMD is head and shoulders above Intel here. Intel has SGX which does nearly nothing at a high cost, has been comprehensively broken, and provides a consumer no real protections. AMD has full transparent memory encryption, can secure VMs from the host, and many other useful things that protect users in the real world. Better yet they are enabled with a BIOS switch and have a tiny overhead.

This time around AMD didn’t add very much but there were some hints that more will be revealed when the full technical disclosure on Rome is done. Officially there is now more space for keys so you can support more encrypted memory VMs per box. The other big box to check is Spectre mitigations are now rolled in to the core but Meltdown and L1TF/Foreshadow are not. Why? Because AMD wasn’t affected by either one and never will be. Patching can work but to be immune from the start is always a better choice.

Getting back to the Rome package again there are a few details left to talk about. The CCXs connect to the IOX via an Infinity Fabric (IF) link. This is point to point and the CCXs do not talk to each other directly, all communication is through the IOX die. That die has all the connections to memory, PCIe, and other sockets on it. All of this is placed on an organic package, not an interposer like some have suggested.

So in the end the numbers for Rome are still a bit unclear. The core count doubled, the FP resources have doubled, the internal data paths have doubled, and lots of other detail changes. The memory channels have stayed the same though and so for anything but trivial benchmarks, you are unlikely to get a 2x increase in performance. But you will get well above 70% more performance out of a Rome which puts it significantly ahead of Intel’s Ice Lake architecture which comes more than a year later. Better yet AMD has PCIe4 on Rome, something Intel won’t have until mid-2020.

AMD is on track to deliver Rome in Q2 of 2019. When SemiAccurate said it was a monster, we weren’t kidding, Intel has nothing to answer this with and won’t until 2022. By then AMD will have two more generations out, assuming both sides execute their roadmaps perfectly. Until then, Intel has no chance in servers and they know it.S|A

Charlie Demerjian

Latest posts by Charlie Demerjian (see all)

- Nuvia Founders Have A New Startup, Nuvacore - Apr 16, 2026

- Nvidia Is Negotiating To Buy A Large PC Oriented Company - Apr 13, 2026

- Dell Does Modular Laptop Boards Right - Apr 7, 2026

- Qualcomm Snapdragon X2 Review Embargo Lifts Tomorrow Morning - Apr 6, 2026

- Intel Cuts HDR OLED Panel Power with SmartHDR - Apr 2, 2026